Steve Scargall

Steve Scargall is a Senior Product Manager and Software Architect at MemVerge, delivering software-defined memory solutions using Compute Express Link (CXL) devices. Steve works with industry leaders across the CXL hardware vendor, Original Equipment Manufacturer (OEM), Cloud Service Provider (CSP), enterprise, and system integrator spaces to architect cutting-edge solutions. Steve holds a BSc in Applied Computer Science and Cybernetics from the University of Reading, UK. He has made significant contributions to the SNIA NVM Programming Technical Work Group, PMDK, NDCTL, IPMCTL, and other memory-centric open-source projects. Steve is the author of Programming Persistent Memory: A Comprehensive Guide for Developers.

Graphify + MemMachine: 79× Token Reduction, Zero Vector Database

I help maintain MemMachine, an open-source long-term memory layer for AI agents. It’s a real codebase: 442 source files, 171 docs, a graph database, a SQL store, an MCP server, a REST API, a Python SDK, and integrations with eight different agent frameworks. When a new contributor asks “where does episodic memory actually get written?”, grep, the tool of choice for many AI coding assistants, doesn’t cut it. The answer threads through five files in three folders, plus a docker-compose service definition and a Helm chart. For each question you ask, the assistant has to search all of these files, using the LLM to semantically understand both the question and the files, then piece together an answer. This can take a lot of tokens and consume much of the context window.

Read More

Is Thinking Mode Affecting Your Agentic Workflows?

I jumped on the trend of running local LLMs and agents and was having a lot of fun until my agents kept failing, timing out, and just stopping without any obvious reason. I tried PaperClip + ZeroClaw, PaperClip + Hermes-Agent, and Hermes-Agent + Hermes-Workspace with Qwen 3.6 and Gemma 4 models (various sizes and quantization levels). All of them failed in the same way at some point in the workflow with almost nothing reported in the logs to indicate what was happening. Some tasks completed without any problem, but most did not, often leaving me to wonder what was going on. After many hours of debugging and reading many forums, I finally found that this was a model serving configuration trap that catches many people the first time they self-host a reasoning model.

Read More

How To Run ZeroClaw in Docker with local LLMs (Qwen3 on an NVIDIA DGX Spark)

ZeroClaw is an open-source agent runtime. By default it expects an API key for a frontier model provider such as Claude or OpenAI. This guide shows how to use a local Qwen3.6 model served by vLLM on an NVIDIA DGX Spark, routed through LiteLLM, with ZeroClaw and Firecrawl running in Docker on a separate host.

It also documents the onboarding bug I hit on a fresh install in v0.7.4 — ZeroClaw issue #6123 — and the config-only workaround.

Read More

Run Free LLMs at Scale: LiteLLM Gateway with Groq, NVIDIA NIM, OpenRouter, and Local vLLM

Introduction

Running large language models is increasingly affordable — but “affordable” rarely means “free, all the time, for every request.” Cloud providers each come with their own rate limits, daily quotas, and occasional model deprecations. Local hardware is fast and private, but not always available (DGX Spark powered down, model being updated, VRAM needed elsewhere). Somewhere between “I have an API key” and “my agents work reliably at scale” is a configuration problem that most guides skip over entirely.
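The reliability problem described above usually comes down to trying providers in a preferred order and moving on when one is unavailable. The following is a minimal, generic sketch of that idea in plain Python. It does not use LiteLLM's API, and the provider names and stub functions (`local_vllm`, `cloud_free_tier`, `cloud_backup`) are hypothetical stand-ins for real clients.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider callable when it cannot serve a request."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stubs that simulate the failure modes mentioned in the text.
def local_vllm(prompt: str) -> str:
    raise ProviderError("host powered down")


def cloud_free_tier(prompt: str) -> str:
    raise ProviderError("daily quota exhausted")


def cloud_backup(prompt: str) -> str:
    return f"echo: {prompt}"


PROVIDERS = [
    ("local_vllm", local_vllm),
    ("cloud_free_tier", cloud_free_tier),
    ("cloud_backup", cloud_backup),
]

if __name__ == "__main__":
    print(complete_with_fallback("hi", PROVIDERS))
```

A gateway adds much more than this (rate-limit awareness, cooldowns, retries), but the ordered-fallback loop is the core behaviour an agent depends on.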

Read More

vLLM Recipe: RedHatAI/Qwen3.6-35B-A3B-NVFP4 on DGX Spark

This is a vLLM Recipe - a production-ready Docker Compose configuration for running open-weight models on local hardware. It documents the exact setup, configuration rationale, and benchmark results so you can get a model running quickly. You are welcome to change the parameters to suit your workloads. This worked for me, so I hope you find it helpful.

This recipe covers Qwen3.6-35B-A3B-NVFP4 - a Mixture-of-Experts model with 35B total parameters but only ~3B active at inference - quantized to NVFP4 by Red Hat AI and running on the NVIDIA DGX Spark (my GigaByte AI Top Atom) with a GB10 Blackwell GPU and 128 GB of unified CPU/GPU memory.
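To see why a 4-bit quantization matters on a 128 GB unified-memory machine, a rough back-of-the-envelope estimate of weight storage helps. The sketch below assumes about 4.5 effective bits per weight for NVFP4 (4-bit values plus one 8-bit scale per 16-weight block); that figure is an assumption for illustration, and real checkpoints typically keep some layers in higher precision, so the actual footprint will differ. Note that all 35B parameters must be resident even though only ~3B are active per token.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 35e9   # total parameters, from the recipe text
NVFP4_BITS = 4.5      # assumption: 4-bit values + one 8-bit scale per 16 weights
BF16_BITS = 16        # unquantized baseline for comparison

nvfp4_gb = weight_footprint_gb(TOTAL_PARAMS, NVFP4_BITS)
bf16_gb = weight_footprint_gb(TOTAL_PARAMS, BF16_BITS)

if __name__ == "__main__":
    print(f"NVFP4 weights: ~{nvfp4_gb:.1f} GB")
    print(f"BF16 weights:  ~{bf16_gb:.1f} GB")
```

The estimate covers weights only; the KV cache and runtime overhead come on top of it.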

Read More

Self-Hosting Firecrawl on Ubuntu 25.04 with Docker Compose

Modern AI agents — Claude Code, Codex, OpenClaw, Hermes-Agent, and custom LangChain pipelines — need a way to read the web. Not raw HTML full of navigation debris, cookie banners, and JavaScript noise, but clean structured text that a language model can actually reason about. Firecrawl is the missing piece: an open-source web scraping and crawling API that fetches any URL and returns clean Markdown, ready to drop straight into a context window or a RAG pipeline.
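As a rough illustration of what "fetch a URL and get Markdown back" looks like from a client, here is a sketch that builds a scrape request with only the Python standard library. The base URL `http://localhost:3002` and the `/v1/scrape` path with a `formats` field are assumptions about a self-hosted instance; check them against the Firecrawl version you deploy.

```python
import json
import urllib.request

# Assumption: a self-hosted Firecrawl instance listening on this address.
FIRECRAWL_BASE = "http://localhost:3002"


def build_scrape_request(url: str, base: str = FIRECRAWL_BASE) -> urllib.request.Request:
    """Build (but do not send) a POST request asking for Markdown output."""
    body = json.dumps({"url": url, "formats": ["markdown"]}).encode("utf-8")
    return urllib.request.Request(
        f"{base}/v1/scrape",  # assumed endpoint path
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def scrape(url: str) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(build_scrape_request(url)) as resp:
        return json.load(resp)


if __name__ == "__main__":
    req = build_scrape_request("https://example.com")
    print(req.get_method(), req.full_url)
```

Keeping request construction separate from sending makes it easy to inspect the payload before pointing an agent at the service.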

Read More

Building an Agentic Team for an Open Source Project with Claude Code

A core engineer on MemMachine — the one who owned the Semantic Memory subsystem — left the project. The codebase didn’t grow any less complex overnight, but the human attention available to maintain it did. That’s a familiar shape of problem in any open source project, and it’s the exact shape where a well-designed Claude Code agent team earns its keep.

This post documents what I built: a 22-agent maintenance team that lives entirely inside MemMachine’s repository, coordinates via Claude Code’s experimental Agent Teams runtime, and operates under a design I can reproduce for any existing repository with real code. The agents don’t push code, don’t sign commits, don’t merge pull requests, and don’t cut releases — humans still gatekeep every consequential action. What the agents do do is the tedious and error-prone middle of software maintenance: triage, spec drafting, implementation, QA, security review, docs, dependency and upstream tracking.

Read More

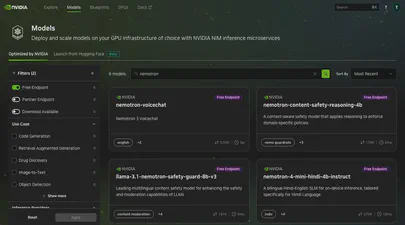

Using the API to Find Free Hosted Models on NVIDIA Builder

The NVIDIA Developer Program provides access to a wide catalog of AI models through NVIDIA Inference Microservices (NIM), offering an OpenAI-compatible API. You can browse and discover available models at build.nvidia.com/explore/discover.

If you want to find models with free hosted endpoints in the browser, you can enable the “Free Endpoint” filter on the model catalog page. But what if you need that information programmatically – in a script, a CI pipeline, or as part of an automated workflow? The browser filter is not accessible through the API, and the /v1/models endpoint does not distinguish between free hosted models and everything else.
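To make the limitation concrete, here is a small sketch that lists model IDs from an OpenAI-compatible `/v1/models` response. The base URL `https://integrate.api.nvidia.com/v1`, the optional bearer token, and the sample payload are assumptions for illustration; the point is that the response shape (a `data` list of objects with an `id`) carries no field marking an endpoint as free.

```python
import json
import urllib.request
from typing import Optional

# Assumption: the OpenAI-compatible base URL for NVIDIA-hosted models.
NVIDIA_BASE = "https://integrate.api.nvidia.com/v1"


def model_ids(payload: dict) -> list[str]:
    """Extract sorted model IDs from an OpenAI-style list-models payload."""
    return sorted(item["id"] for item in payload.get("data", []))


def fetch_models(api_key: Optional[str] = None, base: str = NVIDIA_BASE) -> dict:
    """Fetch the raw /models payload; the API key is sent only if provided."""
    headers = {"Accept": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    req = urllib.request.Request(f"{base}/models", headers=headers)
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


# Illustrative sample payload, not real API output.
SAMPLE = {
    "object": "list",
    "data": [
        {"id": "vendor-b/model-two", "object": "model"},
        {"id": "vendor-a/model-one", "object": "model"},
    ],
}

if __name__ == "__main__":
    print(model_ids(SAMPLE))
```

Because nothing in such a payload separates free hosted models from the rest, any programmatic filter has to rely on information beyond this listing.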

Reflections and What's Next: Lessons from Building lib3mf-rs

Series: Building lib3mf-rs

This post is part of a 5-part series on building a comprehensive 3MF library in Rust:

- Part 1: My Journey Building a 3MF Native Rust Library from Scratch

- Part 2: The Library Landscape - Why Build Another One?

- Part 3: Into the 3MF Specification Wilderness - Reading 1000+ Pages of Specifications

- Part 4: Design for Developers - Features, Flags, and the CLI

- Part 5: Reflections and What’s Next - Lessons from Building lib3mf-rs

As of February 4th, 2026, lib3mf-rs is a published open-source project with complete specification coverage, available for anyone to use.

Read More

Design for Developers: Features, Flags, and the CLI

Series: Building lib3mf-rs

Understanding the specifications was one thing. Designing a library that developers would actually want to use? That was another challenge entirely. I’ve worked on many libraries over the years, for example the Persistent Memory Development Kit, and I’ve learned a lot about what makes a good library. The difference this time is understanding how Rust does things.

Read More

Into the 3MF Specification Wilderness: Reading 1000+ Pages of Specifications

Series: Building lib3mf-rs

“How hard can it be? It’s just a file format.”

That’s what I thought before I started reading the 3MF specifications. After reading, re-reading, and getting AI to help summarize and dig deeper into the interpretations and understandings, I was ready to begin.

Read More

The Library Landscape: Why Build Another One?

Series: Building lib3mf-rs

“Why not just use the existing library?”

It’s a fair question. One I asked myself many times during the early days of this project. The 3MF Consortium maintains lib3MF, a comprehensive C++ implementation used by major companies in additive manufacturing. Why build another one?

Read More

My Journey Building a 3MF Native Rust Library from Scratch

For the past few years, I’ve been getting more and more into 3D printing as a hobbyist. Like everyone, I started with one printer, a Bambu Lab X1 Carbon, and the collection has now grown to three. I find the hobby fascinating as it entangles software, firmware, hardware, physics, and materials science.

As a software engineer, I’m naturally drawn to the software side of things (Slicer and Firmware). But what interests me most is how the software interacts with the hardware and the materials. How the slicer translates the 3D model into instructions for the printer (G-Code). How the printer executes those instructions. How the materials behave under the printer’s control.

Read More

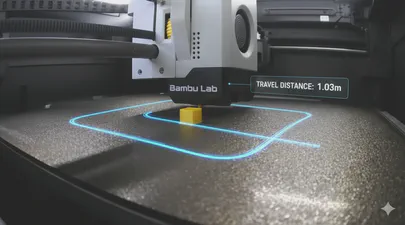

I Added a Feature to OrcaSlicer to Show Travel Distance and Moves

OrcaSlicer is a powerful and popular slicer for 3D printers, known for its rich feature set and active development community. In this blog post, we’ll take a closer look at a new feature I proposed and implemented that provides more insight into your prints: the display of total travel distance and the number of travel moves. See “feat: Display travel distance and move count in G-code summary” for more details.

Read More

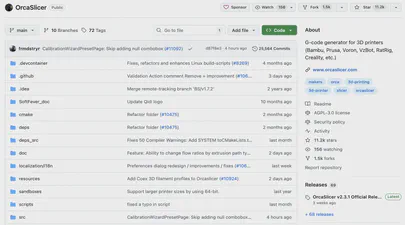

How to Build OrcaSlicer from Source on macOS 15 Sequoia - A Step-by-Step Guide

Building OrcaSlicer from source on macOS 15 (15.6.1 Sequoia) can be straightforward, but recent changes in macOS, Xcode, and CMake require some extra care. This guide updates the official instructions with important tips and fixes from this GitHub issue to avoid common build issues.

For this article, we will be using this build system:

- Apple MacBook Pro M1 (Apple Silicon)

- macOS 15.6.1 (Sequoia)

- Orca Slicer 2.3.1 from https://github.com/SoftFever/OrcaSlicer

Prerequisites

Before you start, ensure you have the following installed:

Read More

How to Build acpidump from Source and use it to Debug Complex CXL and PCI Issues

This article is a detailed guide on how to build the latest version of the acpidump tool from its source code. While many Linux distributions, like Ubuntu, offer a packaged version of this utility, it’s often outdated. For developers and enthusiasts working with modern hardware features, particularly those related to Compute Express Link (CXL), having the most current version is essential.

Before you begin, it’s important to remove any old, conflicting versions of the tools. If you have previously installed the acpica-tools package from your distribution’s repository, you should remove it to prevent conflicts.

Is Your Application Really Using Persistent Memory? Here’s How to Tell.

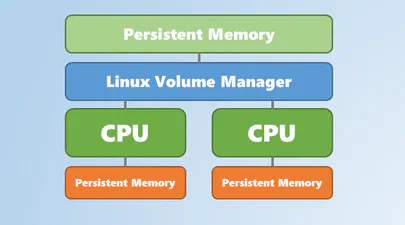

Persistent memory (PMEM), especially when accessed via technologies like CXL, promises the best of both worlds: DRAM-like speed with the durability of an SSD. When you set up a filesystem like XFS or EXT4 in FSDAX (File System Direct Access) mode on a PMEM device, you’re paving a superhighway for your applications, allowing them to map files directly into their address space and bypass the kernel’s page cache entirely.

But here’s the crucial question: after all the setup and configuration, how do you prove that your application’s data is physically residing on the PMEM device and not just in regular RAM? I’ve run into this question myself, so I wrote a small Python script to get a definitive answer using SQLite3 as an example application. However, before we proceed with the script, let’s examine how you can verify this manually.

Read More

How to Confirm Virtual to Physical Memory Mappings for PMem and FSDAX Files

Are you curious whether your application’s memory-mapped files are really using Intel Optane Persistent Memory (PMem), Compute Express Link (CXL) Non-Volatile Memory Modules (NV-CMM), or another DAX-enabled persistent memory device? Want to understand how virtual memory maps onto physical, non-volatile regions? Let’s use easily adaptable scripts in both Python and C to confirm this definitively on your Linux system.
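One common way to check a virtual-to-physical mapping on Linux is to read the process's entry in `/proc/<pid>/pagemap`. The sketch below is not the script from the post; it is a minimal illustration of decoding one 64-bit pagemap entry, where bits 0-54 hold the page frame number (PFN) when the page is present, bit 61 marks a file-mapped or shared page, bit 62 marks a swapped page, and bit 63 marks a present page. Reading real PFNs generally requires root; unprivileged reads return a PFN of zero.

```python
import struct

PFN_MASK = (1 << 55) - 1  # bits 0-54 hold the PFN when the page is present


def decode_pagemap_entry(entry: int) -> dict:
    """Decode the flag bits and PFN from one 64-bit pagemap entry."""
    present = bool((entry >> 63) & 1)
    return {
        "present": present,
        "swapped": bool((entry >> 62) & 1),
        "file_or_shared": bool((entry >> 61) & 1),
        "pfn": (entry & PFN_MASK) if present else None,
    }


def physical_address(pfn: int, vaddr: int, page_size: int = 4096) -> int:
    """Combine a PFN with the in-page offset of a virtual address."""
    return pfn * page_size + (vaddr % page_size)


def read_pagemap_entry(vaddr: int, pid: str = "self", page_size: int = 4096) -> int:
    """Read the raw entry for the page containing vaddr (Linux only)."""
    with open(f"/proc/{pid}/pagemap", "rb") as f:
        f.seek((vaddr // page_size) * 8)  # one 8-byte entry per virtual page
        (entry,) = struct.unpack("=Q", f.read(8))
    return entry


if __name__ == "__main__":
    sample = (1 << 63) | (1 << 61) | 0x12345  # synthetic entry for illustration
    info = decode_pagemap_entry(sample)
    print(info, hex(physical_address(info["pfn"], 0x7F0000001234)))
```

With a physical address in hand, the remaining step is to compare it against the physical range of the persistent memory device, for example the ranges listed in `/proc/iomem`.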

Why Does This Matter?

With the advent of persistent memory and DAX (Direct Access) filesystems, applications can memory-map files directly onto PMem, bypassing the traditional DRAM page cache. This promises significant performance and durability improvements for data-intensive workloads and databases, such as SQLite, Redis, and others.

Read More

CXL Memory NUMA Node Mapping with Sub-NUMA Clustering (SNC) on Linux

CXL (Compute Express Link) memory devices are revolutionizing server architectures, but they also introduce new NUMA complexity, especially when advanced memory configurations, such as Sub-NUMA Clustering (SNC), are enabled. One of the most confusing issues is the mismatch between NUMA node numbers reported by CXL sysfs attributes and those used by Linux memory management tools.

This blog post walks through a real-world scenario, complete with command outputs and diagrams, to help you understand and resolve the CXL NUMA node mapping issue with SNC enabled.

Read More

CXL Device & Fabric Buyer's Guide: A List of GA Components

Last Updated: June 2, 2026

This guide provides a curated list of generally available (GA) Compute Express Link (CXL) devices, fabric components, and memory appliances. It is a technical resource for engineers, architects, and hardware specialists looking to identify and compare CXL memory expansion modules, switches, and full system-level appliances from leading vendors. The tables below detail market-ready components, focusing on the specifications required to design and build CXL-enabled infrastructure.

Read More

CXL Server Buyer's Guide: A Complete List of GA Platforms

Last Updated: June 2, 2026

This quick-reference guide provides a definitive, up-to-date list of generally available (GA) Compute Express Link (CXL) servers from major OEMs including Dell, HPE, Lenovo, and Supermicro. It is designed for data center architects, engineers, and IT decision-makers who need to identify and compare server platforms that support CXL 1.1 and CXL 2.0 for memory expansion and pooling.

Compute Express Link (CXL) is an open-standard interconnect that enables high-speed, low-latency communication between processors and attached devices such as accelerators and memory expanders. CXL 2.0 adoption has accelerated significantly since 2025, with all major CPU platforms now supporting it natively and the first hyperscale cloud deployments validated in production.

Read More

Your Personal Codespace: Self-Host VS Code on Any Server

GitHub Codespaces and other cloud IDEs have revolutionized development, offering a complete VS Code environment that runs on a remote server and is accessible from any browser. It’s a game-changer for productivity and flexibility.

But what if you could have that same powerful, seamless experience on your own terms?

This guide will show you how to build your very own private Codespace, replicating the convenience of the GitHub experience on any server you control—be it a machine in your home lab, a dedicated server, or a budget-friendly cloud VM. We’ll explore two distinct paths to get you up and running with a persistent, browser-based VS Code instance on Ubuntu 24.04, complete with AI assistants like Gemini and GitHub Copilot to boost your workflow.

Read More

Unlock Your CXL Memory: How to Switch from NUMA (System-RAM) to Direct Access (DAX) Mode

As a Linux System Administrator working with Compute Express Link (CXL) memory devices, you should be aware that as of Linux Kernel 6.3, Type 3 CXL.mem devices are now automatically brought online as memory-only NUMA nodes. While this can be beneficial for most situations, it might not be ideal if your application is designed to directly manage the CXL memory as a DAX (Direct Access) device using mmap().

This blog post will explain this behavior and provide a step-by-step guide on how to convert a CXL memory device from a memory-only NUMA node back to DAX mode, allowing applications to mmap the underlying /dev/daxX.Y device. We’ll also cover troubleshooting steps if the memory is actively in use by the kernel or other processes.
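As a rough outline of the conversion, the sketch below wraps the two `daxctl` steps typically involved: taking the memory offline, then reconfiguring the device into devdax mode. The device name `dax0.0` is a placeholder, and the helper names are hypothetical. The function defaults to a dry run that only prints the commands, since the real commands need root and can fail if the memory is still in use.

```python
import subprocess


def devdax_commands(dax_device: str) -> list[list[str]]:
    """Return the daxctl invocations for a system-ram to devdax conversion."""
    return [
        ["daxctl", "offline-memory", dax_device],
        ["daxctl", "reconfigure-device", "--mode=devdax", dax_device],
    ]


def convert_to_devdax(dax_device: str, dry_run: bool = True) -> list[list[str]]:
    """Print the commands (dry run) or execute them in order."""
    commands = devdax_commands(dax_device)
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            # check=True stops at the first failure, e.g. memory still busy.
            subprocess.run(cmd, check=True)
    return commands


if __name__ == "__main__":
    convert_to_devdax("dax0.0")  # placeholder device name
```

If the offline step fails, the device should be left in system-ram mode until the pages in use have been migrated or the consuming processes stopped.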

Fastfetch: The Speedy Successor Neofetch Replacement Your Ubuntu Terminal Needs

If you love customizing your Linux terminal and getting a quick, visually appealing overview of your system specs, you might have used neofetch in the past. However, neofetch is now deprecated and no longer actively maintained. A fantastic, actively maintained alternative is Fastfetch – known for its speed, extensive customization options, and feature set.

While you might be able to install Fastfetch on Ubuntu 22.04 (Jammy Jellyfish) using the standard sudo apt install fastfetch, the version available in the default Ubuntu repositories is often outdated. To get the latest features, bug fixes, and performance improvements, you’ll want to use a different method.

How I Created a Custom ChatGPT Trained on the CXL Specification Documents

If you’re working with Compute Express Link (CXL) and wish you had an AI assistant trained on all the different versions of the specification (1.0, 1.1, 2.0, 3.0, 3.1…), you’re in luck.

Whether you’re a CXL device vendor, a firmware engineer, a Linux Kernel developer, a memory subsystem architect, a hardware validation engineer, or even an application developer working on CXL tools and utilities, chances are you’ve had to reference the CXL spec at some point. And if you have, you already know: these documents are dense, extremely technical, and constantly evolving.

Read More

I Turned Myself Into an Action Figure

Part of being in tech, especially in emerging memory technology, is constantly switching between the serious and the surreal. One day you’re in kernel debug mode, the next you’re explaining complex system architectures on a whiteboard, and then suddenly you’re jumping on the latest craze such as making yourself into an action figure.

It’s fun. It’s human. And honestly? It’s a reminder not to take yourself too seriously. (Even if your job title suggests otherwise.)

Read More

Linux Kernel 6.14 is Released: This is What's New for Compute Express Link (CXL)

The Linux Kernel 6.14 release brings several improvements and additions related to Compute Express Link (CXL) technology.

CXL related changes from Kernel v6.13 to v6.14

Here is the detailed list of all commits merged into the 6.14 Kernel for CXL and DAX. This list was generated by the Linux Kernel CXL Feature Tracker.

- Merge tag ‘cxl-for-6.14’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl/core/regs: Refactor out functions to count regblocks of given type

- cxl/events: Update Memory Module Event Record to CXL spec rev 3.1

- cxl/events: Update DRAM Event Record to CXL spec rev 3.1

- cxl/events: Update General Media Event Record to CXL spec rev 3.1

- cxl/events: Add Component Identifier formatting for CXL spec rev 3.1

- cxl/events: Update Common Event Record to CXL spec rev 3.1

- Merge 6.13-rc7 into driver-core-next

- driver core: Correct API device_for_each_child_reverse_from() prototype

- cxl/pmem: Remove is_cxl_nvdimm_bridge()

- cxl/pmem: Replace match_nvdimm_bridge() with API device_match_type()

- driver core: Constify API device_find_child() and adapt for various usages

- cxl/pci: Add CXL Type 1/2 support to cxl_dvsec_rr_decode()

- cxl/region: Fix region creation for greater than x2 switches

- cxl/pci: Check dport->regs.rcd_pcie_cap availability before accessing

- cxl/pci: Fix potential bogus return value upon successful probing

- module: Convert symbol namespace to string literal

- Merge tag ‘driver-core-6.13-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- Merge tag ‘cxl-for-6.13’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- Merge branch ‘cxl/for-6.13/dcd-prep’ into cxl-for-next

- cxl/region: Refactor common create region code

- cxl/hdm: Use guard() in cxl_dpa_set_mode()

- cxl/pci: Delay event buffer allocation

- sysfs: treewide: constify attribute callback of bin_is_visible()

- Merge branch ‘cxl/for-6.12/printf’ into cxl-for-next

- cxl/cdat: Use %pra for dpa range outputs

- cxl: downgrade a warning message to debug level in cxl_probe_component_regs()

- cxl/pci: Add sysfs attribute for CXL 1.1 device link status

- cxl/core/regs: Add rcd_pcie_cap initialization

- cxl/port: Prevent out-of-order decoder allocation

- cxl/port: Fix use-after-free, permit out-of-order decoder shutdown

- cxl/acpi: Ensure ports ready at cxl_acpi_probe() return

- cxl/port: Fix cxl_bus_rescan() vs bus_rescan_devices()

- cxl/port: Fix CXL port initialization order when the subsystem is built-in

- cxl/events: Fix Trace DRAM Event Record

- cxl/core: Return error when cxl_endpoint_gather_bandwidth() handles a non-PCI device

- move asm/unaligned.h to linux/unaligned.h

- Merge tag ‘cxl-for-6.12’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl: Calculate region bandwidth of targets with shared upstream link

- cxl: Preserve the CDAT access_coordinate for an endpoint

- cxl: Fix comment regarding cxl_query_cmd() return data

- cxl: Convert cxl_internal_send_cmd() to use ‘struct cxl_mailbox’ as input

- cxl: Move mailbox related bits to the same context

- cxl: move cxl headers to new include/cxl/ directory

- cxl/region: Remove lock from memory notifier callback

- cxl/pci: simplify the check of mem_enabled in cxl_hdm_decode_init()

- cxl/pci: Check Mem_info_valid bit for each applicable DVSEC

- cxl/pci: Remove duplicated implementation of waiting for memory_info_valid

- cxl/pci: Fix to record only non-zero ranges

- mm: make range-to-target_node lookup facility a part of numa_memblks

- cxl/pci: Remove duplicate host_bridge->native_aer checking

- cxl/pci: cxl_dport_map_rch_aer() cleanup

- cxl/pci: Rename cxl_setup_parent_dport() and cxl_dport_map_regs()

- cxl/port: Refactor __devm_cxl_add_port() to drop goto pattern

- cxl/port: Use scoped_guard()/guard() to drop device_lock() for cxl_port

- cxl/port: Use __free() to drop put_device() for cxl_port

- cxl: Remove duplicate included header file core.h

- cxl/port: Convert to use ERR_CAST()

- cxl/pci: Get AER capability address from RCRB only for RCH dport

- Merge tag ‘cxl-for-6.11’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- Merge tag ‘driver-core-6.11-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- cxl/core/pci: Move reading of control register to immediately before usage

- Merge branch ‘for-6.11/xor_fixes’ into cxl-for-next

- cxl: Remove defunct code calculating host bridge target positions

- cxl/region: Verify target positions using the ordered target list

- cxl: Restore XOR’d position bits during address translation

- cxl/core: Fold cxl_trace_hpa() into cxl_dpa_to_hpa()

- cxl/memdev: Replace ENXIO with EBUSY for inject poison limit reached

- cxl/acpi: Warn on mixed CXL VH and RCH/RCD Hierarchy

- cxl/core: Fix incorrect vendor debug UUID define

- driver core: have match() callback in struct bus_type take a const *

- cxl/region: Simplify cxl_region_nid()

- cxl/region: Support to calculate memory tier abstract distance

- cxl/region: Fix a race condition in memory hotplug notifier

- cxl: add missing MODULE_DESCRIPTION() macros

- cxl/events: Use a common struct for DRAM and General Media events

- cxl: documentation: add missing files to cxl driver-api

- cxl/region: check interleave capability

- cxl/region: Avoid null pointer dereference in region lookup

- cxl/mem: Fix no cxl_nvd during pmem region auto-assembling

- cxl/region: Fix memregion leaks in devm_cxl_add_region()

- tracing/treewide: Remove second parameter of __assign_str()

- Merge tag ‘pci-v6.10-changes’ of git://git.kernel.org/pub/scm/linux/kernel/git/pci/pci

- Merge tag ‘cxl-for-6.10’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl: Add post-reset warning if reset results in loss of previously committed HDM decoders

- PCI/CXL: Move CXL Vendor ID to pci_ids.h

- Merge remote-tracking branch ‘cxl/for-6.10/cper’ into cxl-for-next

- cxl/pci: Process CPER events

- cxl/region: Convert cxl_pmem_region_alloc to scope-based resource management

- cxl/acpi: Cleanup __cxl_parse_cfmws()

- cxl/region: Fix cxlr_pmem leaks

- Merge remote-tracking branch ‘cxl/for-6.10/dpa-to-hpa’ into cxl-for-next

- cxl/core: Add region info to cxl_general_media and cxl_dram events

- cxl/region: Move cxl_trace_hpa() work to the region driver

- cxl/region: Move cxl_dpa_to_region() work to the region driver

- cxl/trace: Correct DPA field masks for general_media & dram events

- Merge remote-tracking branch ‘cxl/for-6.10/add-log-mbox-cmds’ into cxl-for-next

- cxl/hdm: Debug, use decoder name function

- cxl: Fix use of phys_to_target_node() for x86

- cxl/hdm: dev_warn() on unsupported mixed mode decoder

- cxl/hdm: Add debug message for invalid interleave granularity

- cxl: Fix compile warning for cxl_security_ops extern

- cxl/mbox: Add Clear Log mailbox command

- cxl/mbox: Add Get Log Capabilities and Get Supported Logs Sub-List commands

- cxl: Fix cxl_endpoint_get_perf_coordinate() support for RCH

- cxl/core: Fix potential payload size confusion in cxl_mem_get_poison()

- Merge tag ‘cxl-fixes-6.9-rc4’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl: Add checks to access_coordinate calculation to fail missing data

- cxl: Consolidate dport access_coordinate ->hb_coord and ->sw_coord into ->coord

- cxl: Fix incorrect region perf data calculation

- cxl: Fix retrieving of access_coordinates in PCIe path

- cxl: Remove checking of iter in cxl_endpoint_get_perf_coordinates()

- cxl/core: Fix initialization of mbox_cmd.size_out in get event

- cxl/core/regs: Fix usage of map->reg_type in cxl_decode_regblock() before assigned

- cxl/mem: Fix for the index of Clear Event Record Handle

- cxl: remove CONFIG_CXL_PMU entry in drivers/cxl/Kconfig

- Merge tag ‘trace-v6.9-2’ of git://git.kernel.org/pub/scm/linux/kernel/git/trace/linux-trace

- cxl/trace: Properly initialize cxl_poison region name

- Merge branch ‘for-6.9/cxl-fixes’ into for-6.9/cxl

- Merge branch ‘for-6.9/cxl-einj’ into for-6.9/cxl

- Merge branch ‘for-6.9/cxl-qos’ into for-6.9/cxl

- lib/firmware_table: Provide buffer length argument to cdat_table_parse()

- cxl/pci: Get rid of pointer arithmetic reading CDAT table

- cxl/pci: Rename DOE mailbox handle to doe_mb

- cxl: Fix the incorrect assignment of SSLBIS entry pointer initial location

- cxl/core: Add CXL EINJ debugfs files

- cxl/region: Deal with numa nodes not enumerated by SRAT

- cxl/region: Add memory hotplug notifier for cxl region

- cxl/region: Add sysfs attribute for locality attributes of CXL regions

- cxl/region: Calculate performance data for a region

- cxl: Set cxlmd->endpoint before adding port device

- cxl: Move QoS class to be calculated from the nearest CPU

- cxl: Split out host bridge access coordinates

- cxl: Split out combine_coordinates() for common shared usage

- ACPI: HMAT / cxl: Add retrieval of generic port coordinates for both access classes

- cxl/acpi: Fix load failures due to single window creation failure

- Merge branch ‘for-6.8/cxl-cper’ into for-6.8/cxl

- acpi/ghes: Remove CXL CPER notifications

- cxl/pci: Fix disabling memory if DVSEC CXL Range does not match a CFMWS window

- cxl: Fix sysfs export of qos_class for memdev

- cxl: Remove unnecessary type cast in cxl_qos_class_verify()

- cxl: Change ‘struct cxl_memdev_state’ *_perf_list to single ‘struct cxl_dpa_perf’

- cxl/region: Allow out of order assembly of autodiscovered regions

- cxl/region: Handle endpoint decoders in cxl_region_find_decoder()

- Merge tag ‘efi-fixes-for-v6.8-1’ of git://git.kernel.org/pub/scm/linux/kernel/git/efi/efi

- cxl/trace: Remove unnecessary memcpy’s

- cxl/pci: Skip to handle RAS errors if CXL.mem device is detached

- cxl/region:Fix overflow issue in alloc_hpa()

- cxl/pci: Skip irq features if MSI/MSI-X are not supported

- cxl/core: use sysfs_emit() for attr’s _show()

- Merge branch ‘for-6.8/cxl-cper’ into for-6.8/cxl

- cxl/pci: Register for and process CPER events

- cxl/events: Create a CXL event union

- cxl/events: Separate UUID from event structures

- cxl/events: Remove passing a UUID to known event traces

- cxl/events: Create common event UUID defines

- Merge branch ‘for-6.7/cxl’ into for-6.8/cxl

- Merge branch ‘for-6.8/cxl-misc’ into for-6.8/cxl

- Merge branch ‘for-6.8/cxl-cdat’ into for-6.8/cxl

- cxl/events: Promote CXL event structures to a core header

- cxl: Refactor to use __free() for cxl_root allocation in cxl_endpoint_port_probe()

- cxl: Refactor to use __free() for cxl_root allocation in cxl_find_nvdimm_bridge()

- cxl: Fix device reference leak in cxl_port_perf_data_calculate()

- cxl: Convert find_cxl_root() to return a ‘struct cxl_root *’

- cxl: Introduce put_cxl_root() helper

- cxl/port: Fix missing target list lock

- cxl/port: Fix decoder initialization when nr_targets > interleave_ways

- cxl/region: fix x9 interleave typo

- cxl/trace: Pass UUID explicitly to event traces

- Merge branch ‘for-6.8/cxl-cdat’ into for-6.8/cxl

- cxl/region: use %pap format to print resource_size_t

- cxl/region: Add dev_dbg() detail on failure to allocate HPA space

- cxl: Check qos_class validity on memdev probe

- cxl: Export sysfs attributes for memory device QoS class

- cxl: Store QTG IDs and related info to the CXL memory device context

- cxl: Compute the entire CXL path latency and bandwidth data

- cxl: Add helper function that calculate performance data for downstream ports

- cxl: Store the access coordinates for the generic ports

- cxl: Calculate and store PCI link latency for the downstream ports

- cxl: Add support for _DSM Function for retrieving QTG ID

- cxl: Add callback to parse the SSLBIS subtable from CDAT

- cxl: Add callback to parse the DSLBIS subtable from CDAT

- cxl: Add callback to parse the DSMAS subtables from CDAT

- cxl: Fix unregister_region() callback parameter assignment

- cxl/pmu: Ensure put_device on pmu devices

- cxl/cdat: Free correct buffer on checksum error

- cxl/hdm: Fix dpa translation locking

- cxl: Add Support for Get Timestamp

- cxl/memdev: Hold region_rwsem during inject and clear poison ops

- cxl/core: Always hold region_rwsem while reading poison lists

- cxl/hdm: Fix a benign lockdep splat

- cxl/pci: Change CXL AER support check to use native AER

- cxl/hdm: Remove broken error path

- cxl/hdm: Fix && vs || bug

- Merge branch ‘for-6.7/cxl-commited’ into cxl/next

- Merge branch ‘for-6.7/cxl’ into cxl/next

- Merge branch ‘for-6.7/cxl-qtg’ into cxl/next

- Merge branch ‘for-6.7/cxl-rch-eh’ into cxl/next

- cxl: Add support for reading CXL switch CDAT table

- cxl: Add checksum verification to CDAT from CXL

- cxl: Export QTG ids from CFMWS to sysfs as qos_class attribute

- cxl: Add decoders_committed sysfs attribute to cxl_port

- cxl: Add cxl_decoders_committed() helper

- cxl/core/regs: Rework cxl_map_pmu_regs() to use map->dev for devm

- cxl/core/regs: Rename phys_addr in cxl_map_component_regs()

- cxl/pci: Disable root port interrupts in RCH mode

- cxl/pci: Add RCH downstream port error logging

- cxl/pci: Map RCH downstream AER registers for logging protocol errors

- cxl/pci: Update CXL error logging to use RAS register address

- PCI/AER: Refactor cper_print_aer() for use by CXL driver module

- cxl/pci: Add RCH downstream port AER register discovery

- cxl/port: Remove Component Register base address from struct cxl_port

- cxl/pci: Remove Component Register base address from struct cxl_dev_state

- cxl/hdm: Use stored Component Register mappings to map HDM decoder capability

- cxl/pci: Store the endpoint’s Component Register mappings in struct cxl_dev_state

- cxl/port: Pre-initialize component register mappings

- cxl/port: Rename @comp_map to @reg_map in struct cxl_register_map

- cxl/port: Fix @host confusion in cxl_dport_setup_regs()

- cxl/core/regs: Rename @dev to @host in struct cxl_register_map

- cxl/port: Fix delete_endpoint() vs parent unregistration race

- cxl/region: Fix x1 root-decoder granularity calculations

- cxl/region: Fix cxl_region_rwsem lock held when returning to user space

- cxl/region: Use cxl_calc_interleave_pos() for auto-discovery

- cxl/region: Calculate a target position in a region interleave

- cxl/region: Prepare the decoder match range helper for reuse

- cxl/mbox: Remove useless cast in cxl_mem_create_range_info()

- cxl/region: Do not try to cleanup after cxl_region_setup_targets() fails

- cxl/mem: Fix shutdown order

- cxl/memdev: Fix sanitize vs decoder setup locking

- cxl/pci: Fix sanitize notifier setup

- cxl/pci: Clarify devm host for memdev relative setup

- cxl/pci: Remove inconsistent usage of dev_err_probe()

- cxl/pci: Remove hardirq handler for cxl_request_irq()

- cxl/pci: Cleanup ‘sanitize’ to always poll

- cxl/pci: Remove unnecessary device reference management in sanitize work

- cxl/acpi: Annotate struct cxl_cxims_data with __counted_by

- cxl/port: Fix cxl_test register enumeration regression

- cxl/pci: Update comment

- cxl/port: Quiet warning messages from the cxl_test environment

- cxl/region: Refactor granularity select in cxl_port_setup_targets()

- cxl/region: Match auto-discovered region decoders by HPA range

- cxl/mbox: Fix CEL logic for poison and security commands

- cxl/pci: Replace host_bridge->native_aer with pcie_aer_is_native()

- cxl/pci: Fix appropriate checking for _OSC while handling CXL RAS registers

- cxl/memdev: Only show sanitize sysfs files when supported

- cxl/memdev: Document security state in kern-doc

- cxl/acpi: Return ‘rc’ instead of ‘0’ in cxl_parse_cfmws()

- cxl/acpi: Fix a use-after-free in cxl_parse_cfmws()

- cxl/mem: Fix a double shift bug

- cxl: fix CONFIG_FW_LOADER dependency

- cxl: Fix one kernel-doc comment

- cxl/pci: Use correct flag for sanitize polling

- Merge branch ‘for-6.5/cxl-rch-eh’ into for-6.5/cxl

- Merge branch ‘for-6.5/cxl-perf’ into for-6.5/cxl

- perf: CXL Performance Monitoring Unit driver

- Merge branch ‘for-6.5/cxl-region-fixes’ into for-6.5/cxl

- Merge branch ‘for-6.5/cxl-type-2’ into for-6.5/cxl

- Merge branch ‘for-6.5/cxl-fwupd’ into for-6.5/cxl

- Merge branch ‘for-6.5/cxl-background’ into for-6.5/cxl

- cxl: add a firmware update mechanism using the sysfs firmware loader

- cxl/mem: Support Secure Erase

- cxl/mem: Wire up Sanitization support

- cxl/mbox: Add sanitization handling machinery

- cxl/mem: Introduce security state sysfs file

- cxl/mbox: Allow for IRQ_NONE case in the isr

- Revert “cxl/port: Enable the HDM decoder capability for switch ports”

- cxl/memdev: Formalize endpoint port linkage

- cxl/pci: Unconditionally unmask 256B Flit errors

- cxl/region: Manage decoder target_type at decoder-attach time

- cxl/hdm: Default CXL_DEVTYPE_DEVMEM decoders to CXL_DECODER_DEVMEM

- cxl/port: Rename CXL_DECODER_{EXPANDER, ACCELERATOR} => {HOSTONLYMEM, DEVMEM}

- cxl/memdev: Make mailbox functionality optional

- cxl/mbox: Move mailbox related driver state to its own data structure

- cxl: Remove leftover attribute documentation in ‘struct cxl_dev_state’

- cxl: Fix kernel-doc warnings

- cxl/regs: Clarify when a ‘struct cxl_register_map’ is input vs output

- cxl/region: Fix state transitions after reset failure

- cxl/region: Flag partially torn down regions as unusable

- cxl/region: Move cache invalidation before region teardown, and before setup

- cxl/port: Store the downstream port’s Component Register mappings in struct cxl_dport

- cxl/port: Store the port’s Component Register mappings in struct cxl_port

- cxl/pci: Early setup RCH dport component registers from RCRB

- cxl/mem: Prepare for early RCH dport component register setup

- cxl/regs: Remove early capability checks in Component Register setup

- cxl/port: Remove Component Register base address from struct cxl_dport

- cxl/acpi: Directly bind the CEDT detected CHBCR to the Host Bridge’s port

- cxl/acpi: Move add_host_bridge_uport() after cxl_get_chbs()

- cxl/pci: Refactor component register discovery for reuse

- cxl/core/regs: Add @dev to cxl_register_map

- cxl: Rename ‘uport’ to ‘uport_dev’

- cxl: Rename member @dport of struct cxl_dport to @dport_dev

- cxl/rch: Prepare for caching the MMIO mapped PCIe AER capability

- cxl/acpi: Probe RCRB later during RCH downstream port creation

- cxl/pci: Find and register CXL PMU devices

- cxl: Add functions to get an instance of / count regblocks of a given type

- cxl: Explicitly initialize resources when media is not ready

- cxl/mbox: Add background cmd handling machinery

- cxl/pci: Introduce cxl_request_irq()

- cxl/pci: Allocate irq vectors earlier during probe

- cxl/port: Fix NULL pointer access in devm_cxl_add_port()

- cxl: Move cxl_await_media_ready() to before capacity info retrieval

- cxl: Wait Memory_Info_Valid before access memory related info

- cxl/port: Enable the HDM decoder capability for switch ports

- cxl: Add missing return to cdat read error path

- Merge tag ‘cxl-for-6.4’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- Merge tag ‘driver-core-6.4-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- cxl/mbox: Update CMD_RC_TABLE

- Merge branch ‘for-6.3/cxl-autodetect-fixes’ into for-6.4/cxl

- Merge branch ‘for-6.4/cxl-poison’ into for-6.4/cxl

- cxl/mem: Add debugfs attributes for poison inject and clear

- cxl/memdev: Trace inject and clear poison as cxl_poison events

- cxl/memdev: Warn of poison inject or clear to a mapped region

- cxl/memdev: Add support for the Clear Poison mailbox command

- cxl/memdev: Add support for the Inject Poison mailbox command

- cxl/trace: Add an HPA to cxl_poison trace events

- cxl/region: Provide region info to the cxl_poison trace event

- cxl/memdev: Add trigger_poison_list sysfs attribute

- cxl/trace: Add TRACE support for CXL media-error records

- cxl/mbox: Add GET_POISON_LIST mailbox command

- cxl/mbox: Initialize the poison state

- cxl/mbox: Restrict poison cmds to debugfs cxl_raw_allow_all

- cxl/mbox: Deprecate poison commands

- cxl/port: Fix port to pci device assumptions in read_cdat_data()

- cxl/pci: Rightsize CDAT response allocation

- cxl/pci: Simplify CDAT retrieval error path

- cxl/pci: Use CDAT DOE mailbox created by PCI core

- cxl/pci: Use synchronous API for DOE

- cxl/hdm: Add more HDM decoder debug messages at startup

- cxl/port: Scan single-target ports for decoders

- cxl/core: Drop unused io-64-nonatomic-lo-hi.h

- cxl/hdm: Use 4-byte reads to retrieve HDM decoder base+limit

- cxl/hdm: Fail upon detecting 0-sized decoders

- Merge branch ‘for-6.3/cxl-doe-fixes’ into for-6.3/cxl

- cxl/hdm: Extend DVSEC range register emulation for region enumeration

- cxl/hdm: Limit emulation to the number of range registers

- cxl/region: Move coherence tracking into cxl_region_attach()

- cxl/region: Fix region setup/teardown for RCDs

- cxl/port: Fix find_cxl_root() for RCDs and simplify it

- cxl/hdm: Skip emulation when driver manages mem_enable

- cxl/hdm: Fix double allocation of @cxlhdm

- cxl/pci: Handle excessive CDAT length

- cxl/pci: Handle truncated CDAT entries

- cxl/pci: Handle truncated CDAT header

- driver core: bus: mark the struct bus_type for sysfs callbacks as constant

- cxl/pci: Fix CDAT retrieval on big endian

- Merge tag ‘cxl-for-6.3’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- Merge tag ‘driver-core-6.3-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- Merge branch ‘for-6.3/cxl-events’ into cxl/next

- cxl/mem: Add kdoc param for event log driver state

- cxl/trace: Add serial number to trace points

- cxl/trace: Add host output to trace points

- cxl/trace: Standardize device information output

- Merge branch ‘for-6.3/cxl-rr-emu’ into cxl/next

- cxl/pci: Remove locked check for dvsec_range_allowed()

- cxl/hdm: Add emulation when HDM decoders are not committed

- cxl/hdm: Create emulated cxl_hdm for devices that do not have HDM decoders

- cxl/hdm: Emulate HDM decoder from DVSEC range registers

- cxl/pci: Refactor cxl_hdm_decode_init()

- cxl/port: Export cxl_dvsec_rr_decode() to cxl_port

- cxl/pci: Break out range register decoding from cxl_hdm_decode_init()

- Merge branch ‘for-6.3/cxl’ into cxl/next

- Merge branch ‘for-6.3/cxl-ram-region’ into cxl/next

- cxl: add RAS status unmasking for CXL

- cxl: remove unnecessary calling of pci_enable_pcie_error_reporting()

- cxl: avoid returning uninitialized error code

- cxl/pmem: Fix nvdimm registration races

- Merge branch ‘for-6.3/cxl-ram-region’ into cxl/next

- Merge branch ‘for-6.3/cxl’ into cxl/next

- cxl/uapi: Tag commands from cxl_query_cmd()

- cxl/mem: Remove unused CXL_CMD_FLAG_NONE define

- cxl/dax: Create dax devices for CXL RAM regions

- tools/testing/cxl: Define a fixed volatile configuration to parse

- cxl/region: Add region autodiscovery

- cxl/port: Split endpoint and switch port probe

- cxl/region: Enable CONFIG_CXL_REGION to be toggled

- kernel/range: Uplevel the cxl subsystem’s range_contains() helper

- cxl/region: Move region-position validation to a helper

- cxl/region: Cleanup target list on attach error

- cxl/region: Refactor attach_target() for autodiscovery

- cxl/region: Add volatile region creation support

- cxl/region: Validate region mode vs decoder mode

- cxl/region: Support empty uuids for non-pmem regions

- cxl/region: Add a mode attribute for regions

- cxl/memdev: Fix endpoint port removal

- cxl/mem: Correct full ID range allocation

- Merge branch ‘for-6.3/cxl-events’ into cxl/next

- Merge branch ‘for-6.3/cxl’ into cxl/next

- cxl/region: Fix passthrough-decoder detection

- cxl/region: Fix null pointer dereference for resetting decoder

- cxl/pci: Fix irq oneshot expectations

- cxl/pci: Set the device timestamp

- cxl/mbox: Add missing parameter to docs.

- cxl/mbox: Fix Payload Length check for Get Log command

- driver core: make struct bus_type.uevent() take a const *

- driver core: make struct device_type.devnode() take a const *

- cxl/mem: Trace Memory Module Event Record

- cxl/mem: Trace DRAM Event Record

- cxl/mem: Trace General Media Event Record

- cxl/mem: Wire up event interrupts

- cxl: fix spelling mistakes

- cxl/mbox: Add debug messages for enabled mailbox commands

- cxl/mem: Read, trace, and clear events on driver load

- cxl/pmem: Fix nvdimm unregistration when cxl_pmem driver is absent

- cxl/port: Link the ‘parent_dport’ in portX/ and endpointX/ sysfs

- cxl/region: Clarify when a cxld->commit() callback is mandatory

- cxl/pci: Show opcode in debug messages when sending a command

- cxl: fix cxl_report_and_clear() RAS UE addr mis-assignment

- cxl/region: Only warn about cpu_cache_invalidate_memregion() once

- cxl/pci: Move tracepoint definitions to drivers/cxl/core/

- cxl/region: Fix memdev reuse check

- cxl/pci: Remove endian confusion

- cxl/pci: Add some type-safety to the AER trace points

- cxl/security: Drop security command ioctl uapi

- cxl/mbox: Add variable output size validation for internal commands

- cxl/mbox: Enable cxl_mbox_send_cmd() users to validate output size

- cxl/security: Fix Get Security State output payload endian handling

- cxl: update names for interleave ways conversion macros

- cxl: update names for interleave granularity conversion macros

- cxl/acpi: Warn about an invalid CHBCR in an existing CHBS entry

- cxl/acpi: Fail decoder add if CXIMS for HBIG is missing

- cxl/region: Fix spelling mistake “memergion” -> “memregion”

- cxl/regs: Fix sparse warning

- Merge branch ‘for-6.2/cxl-xor’ into for-6.2/cxl

- Merge branch ‘for-6.2/cxl-aer’ into for-6.2/cxl

- Merge branch ‘for-6.2/cxl-security’ into for-6.2/cxl

- cxl/port: Add RCD endpoint port enumeration

- cxl/mem: Move devm_cxl_add_endpoint() from cxl_core to cxl_mem

- cxl/acpi: Support CXL XOR Interleave Math (CXIMS)

- cxl/pci: Add callback to log AER correctable error

- cxl/pci: Add (hopeful) error handling support

- cxl/pci: add tracepoint events for CXL RAS

- cxl/pci: Find and map the RAS Capability Structure

- cxl/pci: Prepare for mapping RAS Capability Structure

- cxl/port: Limit the port driver to just the HDM Decoder Capability

- cxl/core/regs: Make cxl_map_{component, device}_regs() device generic

- cxl/pci: Kill cxl_map_regs()

- cxl/pci: Cleanup cxl_map_device_regs()

- cxl/pci: Cleanup repeated code in cxl_probe_regs() helpers

- cxl/acpi: Extract component registers of restricted hosts from RCRB

- cxl/region: Manage CPU caches relative to DPA invalidation events

- cxl/pmem: Enforce keyctl ABI for PMEM security

- cxl/region: Fix missing probe failure

- cxl: add dimm_id support for __nvdimm_create()

- cxl/ACPI: Register CXL host ports by bridge device

- tools/testing/cxl: Make mock CEDT parsing more robust

- cxl/acpi: Move rescan to the workqueue

- cxl/pmem: Remove the cxl_pmem_wq and related infrastructure

- cxl/pmem: Refactor nvdimm device registration, delete the workqueue

- cxl/region: Drop redundant pmem region release handling

- cxl/acpi: Simplify cxl_nvdimm_bridge probing

- cxl/pmem: add provider name to cxl pmem dimm attribute group

- cxl/pmem: add id attribute to CXL based nvdimm

- nvdimm/cxl/pmem: Add support for master passphrase disable security command

- cxl/pmem: Add “Passphrase Secure Erase” security command support

- cxl/pmem: Add “Unlock” security command support

- cxl/pmem: Add “Freeze Security State” security command support

- cxl/pmem: Add Disable Passphrase security command support

- cxl/pmem: Add “Set Passphrase” security command support

- cxl/pmem: Introduce nvdimm_security_ops with ->get_flags() operation

- cxl: Replace HDM decoder granularity magic numbers

- cxl/acpi: Improve debug messages in cxl_acpi_probe()

- cxl: Unify debug messages when calling devm_cxl_add_dport()

- cxl: Unify debug messages when calling devm_cxl_add_port()

- cxl/core: Check physical address before mapping it in devm_cxl_iomap_block()

- cxl/core: Remove duplicate declaration of devm_cxl_iomap_block()

- cxl/doe: Request exclusive DOE access

- cxl/region: Recycle region ids

- cxl/region: Fix ‘distance’ calculation with passthrough ports

- cxl/pmem: Fix cxl_pmem_region and cxl_memdev leak

- cxl/region: Fix cxl_region leak, cleanup targets at region delete

- cxl/region: Fix region HPA ordering validation

- cxl/pmem: Use size_add() against integer overflow

- cxl/region: Fix decoder allocation crash

- cxl/pmem: Fix failure to account for 8 byte header for writes to the device LSA.

- cxl/region: Fix null pointer dereference due to pass through decoder commit

- cxl/mbox: Add a check on input payload size

- cxl/hdm: Fix skip allocations vs multiple pmem allocations

- cxl/region: Disallow region granularity != window granularity

- cxl/region: Fix x1 interleave to greater than x1 interleave routing

- cxl/region: Move HPA setup to cxl_region_attach()

- cxl/region: Fix decoder interleave programming

- cxl/region: describe targets and nr_targets members of cxl_region_params

- cxl/regions: add padding for cxl_rr_ep_add nested lists

- cxl/region: Fix IS_ERR() vs NULL check

- cxl/region: Fix region reference target accounting

- cxl/region: Fix region commit uninitialized variable warning

- cxl/region: Fix port setup uninitialized variable warnings

- cxl/region: Stop initializing interleave granularity

- cxl/hdm: Fix DPA reservation vs cxl_endpoint_decoder lifetime

- cxl/acpi: Minimize granularity for x1 interleaves

- cxl/region: Delete ‘region’ attribute from root decoders

- cxl/acpi: Autoload driver for ‘cxl_acpi’ test devices

- cxl/region: decrement ->nr_targets on error in cxl_region_attach()

- cxl/region: prevent underflow in ways_to_cxl()

- cxl/region: uninitialized variable in alloc_hpa()

- cxl/region: Introduce cxl_pmem_region objects

- cxl/pmem: Fix offline_nvdimm_bus() to offline by bridge

- cxl/region: Add region driver boiler plate

- cxl/hdm: Commit decoder state to hardware

- cxl/region: Program target lists

- cxl/region: Attach endpoint decoders

- cxl/acpi: Add a host-bridge index lookup mechanism

- cxl/region: Enable the assignment of endpoint decoders to regions

- cxl/region: Allocate HPA capacity to regions

- cxl/region: Add interleave geometry attributes

- cxl/region: Add a ‘uuid’ attribute

- cxl/region: Add region creation support

- cxl/mem: Enumerate port targets before adding endpoints

- cxl/hdm: Add sysfs attributes for interleave ways + granularity

- cxl/port: Move dport tracking to an xarray

- cxl/port: Move ‘cxl_ep’ references to an xarray per port

- cxl/port: Record parent dport when adding ports

- cxl/port: Record dport in endpoint references

- cxl/hdm: Add support for allocating DPA to an endpoint decoder

- cxl/hdm: Track next decoder to allocate

- cxl/hdm: Add ‘mode’ attribute to decoder objects

- cxl/hdm: Enumerate allocated DPA

- cxl/core: Define a ‘struct cxl_endpoint_decoder’

- cxl/core: Define a ‘struct cxl_root_decoder’

- cxl/acpi: Track CXL resources in iomem_resource

- cxl/core: Define a ‘struct cxl_switch_decoder’

- cxl/port: Read CDAT table

- cxl/pci: Create PCI DOE mailbox’s for memory devices

- cxl/pmem: Delete unused nvdimm attribute

- cxl/hdm: Initialize decoder type for memory expander devices

- cxl/port: Cache CXL host bridge data

- tools/testing/cxl: Add partition support

- cxl/mem: Add a debugfs version of ‘iomem’ for DPA, ‘dpamem’

- cxl/debug: Move debugfs init to cxl_core_init()

- cxl/hdm: Require all decoders to be enumerated

- cxl/mem: Convert partition-info to resources

- cxl: Introduce cxl_to_{ways,granularity}

- cxl/core: Drop is_cxl_decoder()

- cxl/core: Drop ->platform_res attribute for root decoders

- cxl/core: Rename ->decoder_range ->hpa_range

- cxl/hdm: Use local hdm variable

- cxl/port: Keep port->uport valid for the entire life of a port

- cxl/mbox: Fix missing variable payload checks in cmd size validation

- cxl/mbox: Use __le32 in get,set_lsa mailbox structures

- cxl/core: Use is_endpoint_decoder

- cxl: Fix cleanup of port devices on failure to probe driver.

- cxl/port: Enable HDM Capability after validating DVSEC Ranges

- cxl/port: Reuse ‘struct cxl_hdm’ context for hdm init

- cxl/port: Move endpoint HDM Decoder Capability init to port driver

- cxl/pci: Drop @info argument to cxl_hdm_decode_init()

- cxl/mem: Merge cxl_dvsec_ranges() and cxl_hdm_decode_init()

- cxl/mem: Skip range enumeration if mem_enable clear

- cxl/mem: Consolidate CXL DVSEC Range enumeration in the core

- cxl/pci: Move cxl_await_media_ready() to the core

- cxl/mem: Validate port connectivity before dvsec ranges

- cxl/mem: Fix cxl_mem_probe() error exit

- cxl/pci: Drop wait_for_valid() from cxl_await_media_ready()

- cxl/pci: Consolidate wait_for_media() and wait_for_media_ready()

- cxl/mem: Drop mem_enabled check from wait_for_media()

- cxl: Drop cxl_device_lock()

- cxl/acpi: Add root device lockdep validation

- cxl: Replace lockdep_mutex with local lock classes

- cxl/mbox: fix logical vs bitwise typo

- cxl/mbox: Replace NULL check with IS_ERR() after vmemdup_user()

- cxl/mbox: Use type __u32 for mailbox payload sizes

- PM: CXL: Disable suspend

- cxl/mem: Replace redundant debug message with a comment

- cxl/mem: Rename cxl_dvsec_decode_init() to cxl_hdm_decode_init()

- cxl/pci: Make cxl_dvsec_ranges() failure not fatal to cxl_pci

- cxl/mem: Make cxl_dvsec_range() init failure fatal

- cxl/pci: Add debug for DVSEC range init failures

- cxl/mem: Drop DVSEC vs EFI Memory Map sanity check

- cxl/mbox: Use new return_code handling

- cxl/mbox: Improve handling of mbox_cmd hw return codes

- cxl/pci: Use CXL_MBOX_SUCCESS to check against mbox_cmd return code

- cxl/mbox: Drop mbox_mutex comment

- cxl/pmem: Remove CXL SET_PARTITION_INFO from exclusive_cmds list

- cxl/mbox: Block immediate mode in SET_PARTITION_INFO command

- cxl/mbox: Move cxl_mem_command param to a local variable

- cxl/mbox: Make handle_mailbox_cmd_from_user() use a mbox param

- cxl/mbox: Remove dependency on cxl_mem_command for a debug msg

- cxl/mbox: Construct a users cxl_mbox_cmd in the validation path

- cxl/mbox: Move build of user mailbox cmd to a helper functions

- cxl/mbox: Move raw command warning to raw command validation

- cxl/mbox: Move cxl_mem_command construction to helper funcs

- cxl/pci: Drop shadowed variable

- cxl/core/port: Fix NULL but dereferenced coccicheck error

- cxl/port: Hold port reference until decoder release

- cxl/port: Fix endpoint refcount leak

- cxl/core: Fix cxl_device_lock() class detection

- cxl/core/port: Fix unregister_port() lock assertion

- cxl/regs: Fix size of CXL Capability Header Register

- cxl/core/port: Handle invalid decoders

- cxl/core/port: Fix / relax decoder target enumeration

- cxl/core/port: Add endpoint decoders

- cxl/core: Move target_list out of base decoder attributes

- cxl/mem: Add the cxl_mem driver

- cxl/core/port: Add switch port enumeration

- cxl/memdev: Add numa_node attribute

- cxl/pci: Emit device serial number

- cxl/pci: Implement wait for media active

- cxl/pci: Retrieve CXL DVSEC memory info

- cxl/pci: Cache device DVSEC offset

- cxl/pci: Store component register base in cxlds

- cxl/core/port: Remove @host argument for dport + decoder enumeration

- cxl/port: Add a driver for ‘struct cxl_port’ objects

- cxl/core: Emit modalias for CXL devices

- cxl/core/hdm: Add CXL standard decoder enumeration to the core

- cxl/core: Generalize dport enumeration in the core

- cxl/pci: Rename pci.h to cxlpci.h

- cxl/port: Up-level cxl_add_dport() locking requirements to the caller

- cxl/pmem: Introduce a find_cxl_root() helper

- cxl/port: Introduce cxl_port_to_pci_bus()

- cxl/core/port: Use dedicated lock for decoder target list

- cxl: Prove CXL locking

- cxl/core: Track port depth

- cxl/core/port: Make passthrough decoder init implicit

- cxl/core: Fix cxl_probe_component_regs() error message

- cxl/core/port: Clarify decoder creation

- cxl/core: Convert decoder range to resource

- cxl/decoder: Hide physical address information from non-root

- cxl/core/port: Rename bus.c to port.c

- cxl: Introduce module_cxl_driver

- cxl/acpi: Map component registers for Root Ports

- cxl/pci: Add new DVSEC definitions

- cxl: Flesh out register names

- cxl/pci: Defer mailbox status checks to command timeouts

- cxl/pci: Implement Interface Ready Timeout

- cxl: Rename CXL_MEM to CXL_PCI

- cxl/core: Remove cxld_const_init in cxl_decoder_alloc()

- cxl/pmem: Fix module reload vs workqueue state

- ACPI: NUMA: Add a node and memblk for each CFMWS not in SRAT

- cxl/test: Mock acpi_table_parse_cedt()

- cxl/acpi: Convert CFMWS parsing to ACPI sub-table helpers

- cxl/memdev: Remove unused cxlmd field

- cxl/core: Convert to EXPORT_SYMBOL_NS_GPL

- cxl/memdev: Change cxl_mem to a more descriptive name

- cxl/mbox: Remove bad comment

- cxl/pmem: Fix reference counting for delayed work

- Merge tag ‘cxl-for-5.16’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl/pci: Use pci core’s DVSEC functionality

- cxl/pci: Split cxl_pci_setup_regs()

- cxl/pci: Add @base to cxl_register_map

- cxl/pci: Make more use of cxl_register_map

- cxl/pci: Remove pci request/release regions

- cxl/pci: Fix NULL vs ERR_PTR confusion

- cxl/pci: Remove dev_dbg for unknown register blocks

- cxl/pci: Convert register block identifiers to an enum

- cxl/acpi: Do not fail cxl_acpi_probe() based on a missing CHBS

- cxl/core: Replace unions with struct_group()

- cxl/pci: Disambiguate cxl_pci further from cxl_mem

- cxl/core: Split decoder setup into alloc + add

- tools/testing/cxl: Introduce a mock memory device + driver

- cxl/mbox: Move command definitions to common location

- cxl/bus: Populate the target list at decoder create

- tools/testing/cxl: Introduce a mocked-up CXL port hierarchy

- cxl/pmem: Add support for multiple nvdimm-bridge objects

- cxl/pmem: Translate NVDIMM label commands to CXL label commands

- cxl/mbox: Add exclusive kernel command support

- cxl/mbox: Convert ‘enabled_cmds’ to DECLARE_BITMAP

- cxl/pci: Use module_pci_driver

- cxl/mbox: Move mailbox and other non-PCI specific infrastructure to the core

- cxl/pci: Drop idr.h

- cxl/mbox: Introduce the mbox_send operation

- cxl/pci: Clean up cxl_mem_get_partition_info()

- cxl/pci: Make ‘struct cxl_mem’ device type generic

- Merge tag ‘cxl-for-5.15’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- cxl/registers: Fix Documentation warning

- cxl/pmem: Fix Documentation warning

- cxl/pci: Fix debug message in cxl_probe_regs()

- cxl/pci: Fix lockdown level

- cxl/acpi: Do not add DSDT disabled ACPI0016 host bridge ports

- cxl/mem: Adjust ram/pmem range to represent DPA ranges

- cxl/mem: Account for partitionable space in ram/pmem ranges

- cxl/pci: Store memory capacity values

- cxl/pci: Simplify register setup

- cxl/pci: Ignore unknown register block types

- cxl/core: Move memdev management to core

- cxl/pci: Introduce cdevm_file_operations

- cxl/core: Move register mapping infrastructure

- cxl/core: Move pmem functionality

- cxl/core: Improve CXL core kernel docs

- cxl: Move cxl_core to new directory

- bus: Make remove callback return void

- cxl/pci: Rename CXL REGLOC ID

- cxl/acpi: Use the ACPI CFMWS to create static decoder objects

- cxl/acpi: Add the Host Bridge base address to CXL port objects

- cxl/pmem: Register ‘pmem’ / cxl_nvdimm devices

- cxl/pmem: Add initial infrastructure for pmem support

- cxl/core: Add cxl-bus driver infrastructure

- cxl/pci: Add media provisioning required commands

- cxl/component_regs: Fix offset

- cxl/hdm: Fix decoder count calculation

- cxl/acpi: Introduce cxl_decoder objects

- cxl/acpi: Enumerate host bridge root ports

- cxl/acpi: Add downstream port data to cxl_port instances

- cxl/Kconfig: Default drivers to CONFIG_CXL_BUS

- cxl/acpi: Introduce the root of a cxl_port topology

- cxl/pci: Fixup devm_cxl_iomap_block() to take a ‘struct device *’

- cxl/pci: Add HDM decoder capabilities

- cxl/pci: Reserve individual register block regions

- cxl/pci: Map registers based on capabilities

- cxl/pci: Reserve all device regions at once

- cxl/pci: Introduce cxl_decode_register_block()

- cxl/mem: Get rid of @cxlm.base

- cxl/mem: Move register locator logic into reg setup

- cxl/mem: Split creation from mapping in probe

- cxl/mem: Use dev instead of pdev->dev

- cxl/mem: Demarcate vendor specific capability IDs

- cxl/pci.c: Add a ‘label_storage_size’ attribute to the memdev

- cxl: Rename mem to pci

- cxl/core: Refactor CXL register lookup for bridge reuse

- cxl/core: Rename bus.c to core.c

- cxl/mem: Introduce ‘struct cxl_regs’ for “composable” CXL devices

- cxl/mem: Move some definitions to mem.h

- cxl/mem: Fix memory device capacity probing

- cxl/mem: Fix register block offset calculation

- cxl/mem: Force array size of mem_commands[] to CXL_MEM_COMMAND_ID_MAX

- cxl/mem: Disable cxl device power management

- cxl/mem: Do not rely on device_add() side effects for dev_set_name() failures

- cxl/mem: Fix synchronization mechanism for device removal vs ioctl operations

- cxl/mem: Use sysfs_emit() for attribute show routines

- cxl/mem: Fix potential memory leak

- cxl/mem: Return -EFAULT if copy_to_user() fails

- cxl/mem: Add set of informational commands

- cxl/mem: Enable commands via CEL

- cxl/mem: Add a “RAW” send command

- cxl/mem: Add basic IOCTL interface

- cxl/mem: Register CXL memX devices

- cxl/mem: Find device capabilities

- cxl/mem: Introduce a driver for CXL-2.0-Type-3 endpoints

- module: Convert symbol namespace to string literal

- Merge tag ‘libnvdimm-for-6.13’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- dax: delete a stale directory pmem

- dax: Document struct dev_dax_range

- device-dax: correct pgoff align in dax_set_mapping()

- mm: make range-to-target_node lookup facility a part of numa_memblks

- mm/dax: dump start address in fault handler

- Merge tag ‘driver-core-6.11-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- driver core: have match() callback in struct bus_type take a const *

- dax: add missing MODULE_DESCRIPTION() macros

- Merge tag ‘mm-stable-2024-05-17-19-19’ of git://git.kernel.org/pub/scm/linux/kernel/git/akpm/mm

- Merge tag ‘libnvdimm-for-6.10’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- dax/bus.c: use the right locking mode (read vs write) in size_show

- dax/bus.c: don’t use down_write_killable for non-user processes

- dax/bus.c: fix locking for unregister_dax_dev / unregister_dax_mapping paths

- dax/bus.c: replace WARN_ON_ONCE() with lockdep asserts

- memory tier: dax/kmem: introduce an abstract layer for finding, allocating, and putting memory types

- mm: switch mm->get_unmapped_area() to a flag

- dax: remove redundant assignment to variable rc

- dax: constify the struct device_type usage

- fs: claw back a few FMODE_* bits

- Merge tag ‘libnvdimm-for-6.9’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- Merge tag ‘mm-stable-2024-03-13-20-04’ of git://git.kernel.org/pub/scm/linux/kernel/git/akpm/mm

- mm, slab: remove last vestiges of SLAB_MEM_SPREAD

- dax: remove SLAB_MEM_SPREAD flag usage

- dax: fix incorrect list of data cache aliasing architectures

- dax: check for data cache aliasing at runtime

- dax: alloc_dax() return ERR_PTR(-EOPNOTSUPP) for CONFIG_DAX=n

- dax: add a sysfs knob to control memmap_on_memory behavior

- dax/bus.c: replace several sprintf() with sysfs_emit()

- dax/bus.c: replace driver-core lock usage by a local rwsem

- device-dax: make dax_bus_type const

- Merge tag ‘xfs-6.8-merge-3’ of git://git.kernel.org/pub/scm/fs/xfs/xfs-linux

- dax/kmem: allow kmem to add memory with memmap_on_memory

- mm, pmem, xfs: Introduce MF_MEM_PRE_REMOVE for unbind

- Merge tag ‘mm-stable-2023-11-01-14-33’ of git://git.kernel.org/pub/scm/linux/kernel/git/akpm/mm

- dax, kmem: calculate abstract distance with general interface

- dax: refactor deprecated strncpy

- mm: remove enum page_entry_size

- memory tier: rename destroy_memory_type() to put_memory_type()

- dax: enable dax fault handler to report VM_FAULT_HWPOISON

- dax/kmem: Pass valid argument to memory_group_register_static

- dax: Cleanup extra dax_region references

- dax: Introduce alloc_dev_dax_id()

- dax: Use device_unregister() in unregister_dax_mapping()

- dax: Fix dax_mapping_release() use after free

- dax: fix missing-prototype warnings

- Merge tag ‘cxl-for-6.3’ of git://git.kernel.org/pub/scm/linux/kernel/git/cxl/cxl

- Merge tag ‘driver-core-6.3-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- Merge tag ‘mm-stable-2023-02-20-13-37’ of git://git.kernel.org/pub/scm/linux/kernel/git/akpm/mm

- Merge tag ‘rcu.2023.02.10a’ of git://git.kernel.org/pub/scm/linux/kernel/git/paulmck/linux-rcu

- dax/kmem: Fix leak of memory-hotplug resources

- dax: cxl: add CXL_REGION dependency

- cxl/dax: Create dax devices for CXL RAM regions

- dax: Assign RAM regions to memory-hotplug by default

- dax/hmem: Move hmem device registration to dax_hmem.ko

- dax/hmem: Convey the dax range via memregion_info()

- dax/hmem: Drop unnecessary dax_hmem_remove()

- dax/hmem: Move HMAT and Soft reservation probe initcall level

- mm: replace vma->vm_flags direct modifications with modifier calls

- drivers/dax: Remove “select SRCU”

- driver core: make struct bus_type.uevent() take a const *

- dax: super.c: fix kernel-doc bad line warning

- device-dax: Fix duplicate ‘hmem’ device registration

- Merge tag ‘libnvdimm-for-6.1’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- Merge tag ‘mm-stable-2022-10-08’ of git://git.kernel.org/pub/scm/linux/kernel/git/akpm/mm

- ACPI: HMAT: Release platform device in case of platform_device_add_data() fails

- dax: Remove usage of the deprecated ida_simple_xxx API

- mm/demotion/dax/kmem: set node’s abstract distance to MEMTIER_DEFAULT_DAX_ADISTANCE

- devdax: Fix soft-reservation memory description

- dax: introduce holder for dax_device

- dax: add .recovery_write dax_operation

- dax: introduce DAX_RECOVERY_WRITE dax access mode

- Merge tag ‘dax-for-5.18’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- Merge tag ‘folio-5.18b’ of git://git.infradead.org/users/willy/pagecache

- fs: allocate inode by using alloc_inode_sb()

- fs: Convert __set_page_dirty_no_writeback to noop_dirty_folio

- fs: Remove noop_invalidatepage()

- dax: Fix missing kdoc for dax_device

- dax: make sure inodes are flushed before destroy cache

- Merge branch ‘akpm’ (patches from Andrew)

- device-dax: compound devmap support

- device-dax: remove pfn from __dev_dax_{pte,pmd,pud}_fault()

- device-dax: set mapping prior to vmf_insert_pfn{,_pmd,_pud}()

- device-dax: factor out page mapping initialization

- device-dax: ensure dev_dax->pgmap is valid for dynamic devices

- device-dax: use struct_size()

- device-dax: use ALIGN() for determining pgoff

- dax: remove the copy_from_iter and copy_to_iter methods

- dax: remove the DAXDEV_F_SYNC flag

- dax: simplify dax_synchronous and set_dax_synchronous

- dax: return the partition offset from fs_dax_get_by_bdev

- fsdax: simplify the pgoff calculation

- dax: remove dax_capable

- dax: move the partition alignment check into fs_dax_get_by_bdev

- dax: remove the pgmap sanity checks in generic_fsdax_supported

- dax: simplify the dax_device <-> gendisk association

- dax: remove CONFIG_DAX_DRIVER

- dm: make the DAX support depend on CONFIG_FS_DAX

- dax: Kill DEV_DAX_PMEM_COMPAT

- nvdimm/pmem: move dax_attribute_group from dax to pmem

- Merge tag ‘libnvdimm-for-5.15’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- Merge branch ‘akpm’ (patches from Andrew)

- dax/kmem: use a single static memory group for a single probed unit

- mm/memory_hotplug: remove nid parameter from remove_memory() and friends

- Merge tag ‘driver-core-5.15-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/gregkh/driver-core

- dax: remove bdev_dax_supported

- dax: stub out dax_supported for !CONFIG_FS_DAX

- dax: remove __generic_fsdax_supported

- dax: move the dax_read_lock() locking into dax_supported

- dax: mark dax_get_by_host static

- dax: stop using bdevname

- Merge branch ‘for-5.14/dax’ into libnvdimm-fixes

- bus: Make remove callback return void

- dax: Ensure errno is returned from dax_direct_access

- fs: remove noop_set_page_dirty()

- dax: avoid -Wempty-body warnings

- Merge branch ‘work.misc’ of git://git.kernel.org/pub/scm/linux/kernel/git/viro/vfs

- Merge branch ‘for-5.12/dax’ into for-5.12/libnvdimm

- whack-a-mole: don’t open-code iminor/imajor

- dax-device: Make remove callback return void

- device-dax: Drop an empty .remove callback

- device-dax: Fix error path in dax_driver_register

- device-dax: Properly handle drivers without remove callback

- device-dax: Prevent registering drivers without probe callback

- libnvdimm: Make remove callback return void

- device-dax: Fix default return code of range_parse()

- Merge tag ‘libnvdimm-for-5.11’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- device-dax: Avoid an unnecessary check in alloc_dev_dax_range()

- device-dax: Fix range release

- device-dax: delete a redundancy check in dev_dax_validate_align()

- device-dax/core: Fix memory leak when rmmod dax.ko

- device-dax/pmem: Convert comma to semicolon

- vm_ops: rename .split() callback to .may_split()

- device-dax/kmem: use struct_size()

- mm: fix phys_to_target_node() and memory_add_physaddr_to_nid() exports

- Merge tag ‘fuse-update-5.10’ of git://git.kernel.org/pub/scm/linux/kernel/git/mszeredi/fuse

- mm/memory_hotplug: prepare passing flags to add_memory() and friends

- device-dax/kmem: fix resource release

- device-dax: add a range mapping allocation attribute

- dax/hmem: introduce dax_hmem.region_idle parameter

- device-dax: add an ‘align’ attribute

- device-dax: make align a per-device property

- device-dax: introduce ‘mapping’ devices

- device-dax: add dis-contiguous resource support

- mm/memremap_pages: support multiple ranges per invocation

- mm/memremap_pages: convert to ‘struct range’

- device-dax: add resize support

- device-dax: introduce ‘seed’ devices

- device-dax: introduce ‘struct dev_dax’ typed-driver operations

- device-dax: add an allocation interface for device-dax instances

- device-dax/kmem: replace release_resource() with release_mem_region()

- device-dax/kmem: move resource name tracking to drvdata

- device-dax/kmem: introduce dax_kmem_range()

- device-dax: make pgmap optional for instance creation

- device-dax: move instance creation parameters to ‘struct dev_dax_data’

- device-dax: drop the dax_region.pfn_flags attribute

- ACPI: HMAT: attach a device for each soft-reserved range

- ACPI: HMAT: refactor hmat_register_target_device to hmem_register_device

- dax: Fix stack overflow when mounting fsdax pmem device

- dm: Call proper helper to determine dax support

- Merge tag ‘libnvdimm-fix-v5.9-rc5’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- dax: Modify bdev_dax_pgoff() to handle NULL bdev

- Merge tag ‘for-linus-5.9-rc4-tag’ of git://git.kernel.org/pub/scm/linux/kernel/git/xen/tip

- memremap: rename MEMORY_DEVICE_DEVDAX to MEMORY_DEVICE_GENERIC

- dax: fix detection of dax support for non-persistent memory block devices

- dax: do not print error message for non-persistent memory block device

- Merge tag ‘libnvdimm-for-5.9’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- drivers/dax: Expand lock scope to cover the use of addresses

- dax: print error message by pr_info() in __generic_fsdax_supported()

- block: remove the bd_queue field from struct block_device

- device-dax: add memory via add_memory_driver_managed()

- vfs: track per-sb writeback errors and report them to syncfs

- device-dax: don’t leak kernel memory to user space after unloading kmem

- dax: Move mandatory ->zero_page_range() check in alloc_dax()

- dax, pmem: Add a dax operation zero_page_range

- dax: Get rid of fs_dax_get_by_host() helper

- Merge tag ‘libnvdimm-for-5.5’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- dax: Add numa_node to the default device-dax attributes

- dax: Simplify root read-only definition for the ‘resource’ attribute

- dax: Create a dax device_type

- libnvdimm/namespace: Differentiate between probe mapping and runtime mapping

- device-dax: Add a driver for “hmem” devices

- dax: Fix alloc_dax_region() compile warning

- Merge branch ‘work.mount0’ of git://git.kernel.org/pub/scm/linux/kernel/git/viro/vfs

- Merge tag ‘dax-for-5.3’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- Merge tag ‘libnvdimm-for-5.3’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- device-dax: “Hotremove” persistent memory that is used like normal RAM

- device-dax: fix memory and resource leak if hotplug fails

- libnvdimm: add dax_dev sync flag

- device-dax: use the dev_pagemap internal refcount

- memremap: pass a struct dev_pagemap to ->kill and ->cleanup

- memremap: move dev_pagemap callbacks into a separate structure

- memremap: validate the pagemap type passed to devm_memremap_pages

- device-dax: Add a ‘resource’ attribute

- mm/devm_memremap_pages: fix final page put race

- treewide: Replace GPLv2 boilerplate/reference with SPDX - rule 295

- vfs: Convert dax to use the new mount API

- mount_pseudo(): drop ‘name’ argument, switch to d_make_root()

- Merge tag ‘libnvdimm-fixes-5.2-rc2’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- treewide: Add SPDX license identifier - Makefile/Kconfig

- device-dax: Drop register_filesystem()

- dax: Arrange for dax_supported check to span multiple devices

- Merge tag ‘libnvdimm-fixes-5.2-rc1’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- mm/huge_memory: fix vmf_insert_pfn_{pmd, pud}() crash, handle unaligned addresses

- drivers/dax: Allow to include DEV_DAX_PMEM as builtin

- dax: make use of ->free_inode()

- dax/pmem: Fix whitespace in dax_pmem

- Merge tag ‘devdax-for-5.1’ of git://git.kernel.org/pub/scm/linux/kernel/git/nvdimm/nvdimm

- device-dax: “Hotplug” persistent memory for use like normal RAM

- device-dax: Add a ‘modalias’ attribute to DAX ‘bus’ devices

- dax: Check the end of the block-device capacity with dax_direct_access()

- device-dax: Add a ‘target_node’ attribute

- device-dax: Auto-bind device after successful new_id

- acpi/nfit, device-dax: Identify differentiated memory with a unique numa-node

- device-dax: Add /sys/class/dax backwards compatibility

- device-dax: Add support for a dax override driver