Blog Posts

vLLM Recipe: RedHatAI/Qwen3.6-35B-A3B-NVFP4 on DGX Spark

This is a vLLM Recipe - a production-ready Docker Compose configuration for running open-weight models on local hardware. It documents the exact setup, configuration rationale, and benchmark results so you can get a model running quickly. You are welcome to change the parameters to suit your workloads. This worked for me, so I hope you find it helpful.

This recipe covers Qwen3.6-35B-A3B-NVFP4 - a Mixture-of-Experts model with 35B total parameters but only ~3B active at inference - quantized to NVFP4 by Red Hat AI and running on the NVIDIA DGX Spark (my GigaByte AI Top Atom) with a GB10 Blackwell GPU and 128 GB of unified CPU/GPU memory.

Read More

Self-Hosting Firecrawl on Ubuntu 25.04 with Docker Compose

Modern AI agents — Claude Code, Codex, OpenClaw, Hermes-Agent, and custom LangChain pipelines — need a way to read the web. Not raw HTML full of navigation debris, cookie banners, and JavaScript noise, but clean structured text that a language model can actually reason about. Firecrawl is the missing piece: an open-source web scraping and crawling API that fetches any URL and returns clean Markdown, ready to drop straight into a context window or a RAG pipeline.
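As a rough illustration of what "fetches any URL and returns clean Markdown" looks like from a client, here is a minimal sketch using only the Python standard library. The endpoint `http://localhost:3002/v1/scrape`, the request fields, and the `success`/`data.markdown` response shape are assumptions about a typical self-hosted Firecrawl instance; check them against the API version you deploy. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: a self-hosted Firecrawl instance listening on localhost:3002
# and exposing a v1-style scrape endpoint. Adjust to match your deployment.
FIRECRAWL_URL = "http://localhost:3002/v1/scrape"


def build_scrape_request(url: str, endpoint: str = FIRECRAWL_URL) -> urllib.request.Request:
    """Build a POST request asking Firecrawl for the page as Markdown."""
    body = json.dumps({"url": url, "formats": ["markdown"]}).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_markdown(response: dict) -> str:
    """Pull the Markdown text out of an (assumed) Firecrawl scrape response."""
    if not response.get("success"):
        raise RuntimeError(f"scrape failed: {response.get('error', 'unknown error')}")
    return response["data"]["markdown"]


def scrape(url: str) -> str:
    """Send the request and return Markdown; needs a running Firecrawl instance."""
    with urllib.request.urlopen(build_scrape_request(url), timeout=60) as resp:
        return extract_markdown(json.load(resp))
```

Calling `scrape("https://example.com")` against a running instance should return a Markdown string that can be passed to a model or indexed for RAG.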

Read More

Building an Agentic Team for an Open Source Project with Claude Code

A core engineer on MemMachine — the one who owned the Semantic Memory subsystem — left the project. The codebase didn’t grow any less complex overnight, but the human attention available to maintain it did. That’s a familiar shape of problem in any open source project, and it’s the exact shape where a well-designed Claude Code agent team earns its keep.

This post documents what I built: a 22-agent maintenance team that lives entirely inside MemMachine’s repository, coordinates via Claude Code’s experimental Agent Teams runtime, and follows a design I can reproduce for any existing repository with real code. The agents don’t push code, sign commits, merge pull requests, or cut releases; humans still gatekeep every consequential action. What the agents do handle is the tedious, error-prone middle of software maintenance: triage, spec drafting, implementation, QA, security review, docs, and dependency and upstream tracking.

Read More

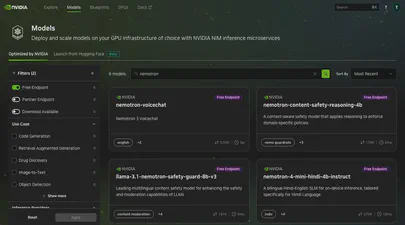

Using the API to Find Free Hosted Models on NVIDIA Builder

The NVIDIA Developer Program provides access to a wide catalog of AI models through NVIDIA Inference Microservices (NIM), offering an OpenAI-compatible API. You can browse and discover available models at build.nvidia.com/explore/discover.

If you want to find models with free hosted endpoints in the browser, you can enable the “Free Endpoint” filter on the model catalog page. But what if you need that information programmatically – in a script, a CI pipeline, or as part of an automated workflow? The browser filter is not accessible through the API, and the /v1/models endpoint does not distinguish between free hosted models and everything else.
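To make the limitation concrete, here is a minimal sketch of listing models through the OpenAI-compatible /v1/models endpoint. The base URL `https://integrate.api.nvidia.com/v1`, the Bearer-token header, and the helper names are assumptions for illustration. The response is assumed to follow the OpenAI list shape (`{"data": [{"id": ...}, ...]}`), which has no field marking a model as free or hosted.

```python
import json
import urllib.request

# Assumption: base URL of NVIDIA's hosted OpenAI-compatible API.
BASE_URL = "https://integrate.api.nvidia.com/v1"


def extract_model_ids(payload: dict) -> list[str]:
    """Return sorted model ids from an OpenAI-style /v1/models response."""
    return sorted(item["id"] for item in payload.get("data", []) if "id" in item)


def fetch_models(api_key: str, base_url: str = BASE_URL) -> dict:
    """GET /v1/models with a Bearer token; requires network access and a valid key."""
    request = urllib.request.Request(
        f"{base_url}/models",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Accept": "application/json",
        },
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)
```

With a key exported in an environment variable (the name is up to you), `extract_model_ids(fetch_models(key))` yields the full catalog of ids, which is exactly why the listing alone cannot answer the "which ones are free?" question.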

Reflections and What's Next: Lessons from Building lib3mf-rs

Series: Building lib3mf-rs

This post is part of a 5-part series on building a comprehensive 3MF library in Rust:

- Part 1: My Journey Building a 3MF Native Rust Library from Scratch

- Part 2: The Library Landscape - Why Build Another One?

- Part 3: Into the 3MF Specification Wilderness - Reading 1000+ Pages of Specifications

- Part 4: Design for Developers - Features, Flags, and the CLI

- Part 5: Reflections and What’s Next - Lessons from Building lib3mf-rs

As of February 4th, 2026, lib3mf-rs is a published open-source project with complete specification coverage, available for anyone to use.

Read More

Design for Developers: Features, Flags, and the CLI

Understanding the specifications was one thing. Designing a library that developers would actually want to use? That was another challenge entirely. I’ve worked on many libraries over the years, the Persistent Memory Development Kit among them, and I’ve learned a lot about what makes a good library. The difference this time is understanding how Rust does things.

Read More

Into the 3MF Specification Wilderness: Reading 1000+ Pages of Specifications

“How hard can it be? It’s just a file format.”

That’s what I thought before I started reading the 3MF specifications. After reading and re-reading them, and getting AI to help summarize the text and dig deeper into how to interpret it, I was ready to begin.

Read More

The Library Landscape: Why Build Another One?

“Why not just use the existing library?”

It’s a fair question, and one I asked myself many times during the early days of this project. The 3MF Consortium maintains lib3MF, a comprehensive C++ implementation used by major companies in additive manufacturing. Why build another one?

Read More

Categories

- 3D Printing (7)

- AI (7)

- Books (2)

- Cloud Computing (1)

- Conferences (2)

- CXL (15)

- Data Center (2)

- Development (2)

- Events (2)

- Hardware (1)

- How To (35)

- Linux (31)

- Machine Learning (1)

- OrcaSlicer (2)

- Performance (2)

- Persistent Memory (1)

- PMEM (1)

- Product Manager (1)

- Projects (3)

- Servers (1)

- Storage (1)

- System Administration (2)

- Troubleshooting (4)

- Ubuntu (1)

- Vector Databases (1)

Tags

- 3D Printing

- 3MF

- ACPI

- ACPI-CA

- Acpidump

- Active-Memory

- Agent

- Agent Skills

- Agent Teams

- AI

- AI Agents

- AI Engineering

- AMD

- API

- Apple Silicon

- Arcade

- Artificial Intelligence

- AWS EC2

- Bash

- Benchmark

- Blackwell

- Blister Pack

- Book

- Boot

- Bootable-Usb

- Build From Source

- Buyer's Guide

- C

- C-2

- Chat Completions

- Chat GPT

- ChatGPT

- Claude Code

- Clflushopt

- Cloud

- CMake

- Code Tunnel

- Code-Server

- Codespaces

- Codex

- Compute Express Link

- Cpu

- Crawling

- Custom GPT

- Custom-Kernel

- CXL

- CXL 1.0

- CXL 1.1

- CXL 2.0

- CXL 3.0

- CXL Devices

- CXL Specification

- Data Center

- DAX

- Daxctl

- Debugging

- Dell

- Development

- Device-Mapper

- DGX Spark

- Dm-Writecache

- Docker

- Docker Compose

- DRAM

- Edge

- Enfabrica

- Esxi

- Fastfetch

- Featured

- Fedora

- Firecrawl

- Firmware

- Free AI Models

- Frequency

- FSDAX

- G-Code

- GB10

- Generative Prompt Engineering

- Git

- Governor

- Gpg

- GPT

- Gpt-3

- Gpt-4

- GPU

- Grafana

- H3 Platform

- Hermes-Agent

- Home Lab

- HPE

- Iasl

- Intel

- Ipmctl

- Java

- Kernel

- Kvm

- LangChain

- Lenovo

- Linux

- Linux Kernel

- Linux-Volume-Manager

- LLM

- Local LLM

- Lvm

- Machine Learning

- MacOS

- Mainline

- MAME

- MemMachine

- Memory

- Memory Management

- Memory Mapping

- Memory-Tiering

- Micron

- Microsoft

- ML

- Mmap

- MoE

- Movdir64b

- MTP

- Mysql

- Napkin Math

- NDCTL

- Neofetch

- NIM

- NUMA

- Nvdimm

- NVFP4

- NVIDIA

- NVIDIA Builder

- NVIDIA Developer Program

- Ollama

- Open Source

- Open Source Maintenance

- Open WebUI

- OpenClaw

- Optane

- OrcaSlicer

- Pagemap

- PCIe

- Percona

- Performance

- Performance Tuning

- Persistent Memory

- Personal Branding

- Physical Address

- Physical Memory

- Pmdk

- PMem

- Powersave

- Procfs

- Product Manager

- Programming

- Prometheus

- Prompt Engineering

- Python

- Qdrant

- QEMU

- Qwen3

- Qwen3.6

- RAG

- RedHatAI

- Remote Development

- Retimers

- Retrieval Augmented Generation

- Rust

- Samsung

- Self-Hosting

- Server

- Servers

- SNC

- Spec-Driven Development

- Speculative Decoding

- SSH

- STREAM Benchmark

- Sub-NUMA Cluster

- Sub-NUMA Clustering

- Subagents

- Supermicro

- Switches

- Sysadmin

- Sysfs

- System Administration

- System Information

- System-Ram

- Technical Documentation

- Terminal

- Tiered-Memory

- Travel Moves

- Tutorial

- Ubuntu

- Ubuntu 22.04

- Ubuntu 25.04

- Vector Databases

- Virtual Memory

- VLLM

- Vmware

- Vmware-Esxi

- Vpmem

- VS Code

- Vsphere

- Web Scraping

- Website

- Window

- Windows

- Windows-Server

- Working-Set-Size

- Wss

- Xcode