AI

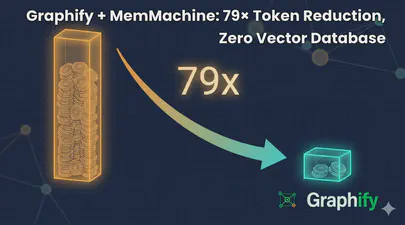

Graphify + MemMachine: 79× Token Reduction, Zero Vector Database

I help maintain MemMachine, an open-source long-term memory layer for AI agents. It’s a real codebase: 442 source files, 171 docs, a graph database, a SQL store, an MCP server, a REST API, a Python SDK, and integrations with eight different agent frameworks. When a new contributor asks “where does episodic memory actually get written?”, grep, the tool of choice for many AI coding assistants, doesn’t cut it. The answer threads through five files in three folders, plus a docker-compose service definition and a Helm chart. For every question you ask, the assistant has to search all of these files, use the LLM to interpret both the question and the files, and then piece together an answer. That burns a lot of tokens and consumes much of the context window.

Read More

Is Thinking Mode Affecting Your Agentic Workflows?

I jumped on the trend of running local LLMs and agents and was having a lot of fun until my agents kept failing, timing out, and just stopping without any obvious reason. I tried PaperClip + ZeroClaw, PaperClip + Hermes-Agent, and Hermes-Agent + Hermes-Workspace with Qwen 3.6 and Gemma 4 models (various sizes and quantization levels). All of them failed in the same way at some point in the workflow with almost nothing reported in the logs to indicate what was happening. Some tasks completed without any problem, but most did not, often leaving me to wonder what was going on. After many hours of debugging and reading many forums, I finally found that this was a model serving configuration trap that catches many people the first time they self-host a reasoning model.

Read More

How To Run ZeroClaw in Docker with local LLMs (Qwen3 on an NVIDIA DGX Spark)

ZeroClaw is an open-source agent runtime. By default it expects an API key for a hosted frontier model (Claude, OpenAI, and so on). This guide shows how to use a local Qwen3.6 model served by vLLM on an NVIDIA DGX Spark, routed through LiteLLM, with ZeroClaw and Firecrawl running in Docker on a separate host.

It also documents the onboarding bug I hit on a fresh install in v0.7.4 — ZeroClaw issue #6123 — and the config-only workaround.

Read More

Run Free LLMs at Scale: LiteLLM Gateway with Groq, NVIDIA NIM, OpenRouter, and Local vLLM

Introduction

Running large language models is increasingly affordable — but “affordable” rarely means “free, all the time, for every request.” Cloud providers each come with their own rate limits, daily quotas, and occasional model deprecations. Local hardware is fast and private, but not always available (DGX Spark powered down, model being updated, VRAM needed elsewhere). Somewhere between “I have an API key” and “my agents work reliably at scale” is a configuration problem that most guides skip over entirely.

Read More

vLLM Recipe: RedHatAI/Qwen3.6-35B-A3B-NVFP4 on DGX Spark

This is a vLLM Recipe - a production-ready Docker Compose configuration for running open-weight models on local hardware. It documents the exact setup, configuration rationale, and benchmark results so you can get a model running quickly. You are welcome to change the parameters to suit your workloads. This worked for me, so I hope you find it helpful.

This recipe covers Qwen3.6-35B-A3B-NVFP4 - a Mixture-of-Experts model with 35B total parameters but only ~3B active at inference - quantized to NVFP4 by Red Hat AI and running on an NVIDIA DGX Spark-class system (my Gigabyte AI TOP ATOM) with a GB10 Blackwell GPU and 128 GB of unified CPU/GPU memory.

Read More

Self-Hosting Firecrawl on Ubuntu 25.04 with Docker Compose

Modern AI agents — Claude Code, Codex, OpenClaw, Hermes-Agent, and custom LangChain pipelines — need a way to read the web. Not raw HTML full of navigation debris, cookie banners, and JavaScript noise, but clean structured text that a language model can actually reason about. Firecrawl is the missing piece: an open-source web scraping and crawling API that fetches any URL and returns clean Markdown, ready to drop straight into a context window or a RAG pipeline.

Read More

Building an Agentic Team for an Open Source Project with Claude Code

A core engineer on MemMachine — the one who owned the Semantic Memory subsystem — left the project. The codebase didn’t grow any less complex overnight, but the human attention available to maintain it did. That’s a familiar shape of problem in any open source project, and it’s the exact shape where a well-designed Claude Code agent team earns its keep.

This post documents what I built: a 22-agent maintenance team that lives entirely inside MemMachine’s repository, coordinates via Claude Code’s experimental Agent Teams runtime, and operates under a design I can reproduce for any existing repository with real code. The agents don’t push code, don’t sign commits, don’t merge pull requests, and don’t cut releases; humans still gatekeep every consequential action. What the agents do handle is the tedious, error-prone middle of software maintenance: triage, spec drafting, implementation, QA, security review, docs, and dependency and upstream tracking.

Read More

Using the API to Find Free Hosted Models on NVIDIA Builder

The NVIDIA Developer Program provides access to a wide catalog of AI models through NVIDIA Inference Microservices (NIM), offering an OpenAI-compatible API. You can browse and discover available models at build.nvidia.com/explore/discover.

If you want to find models with free hosted endpoints in the browser, you can enable the “Free Endpoint” filter on the model catalog page. But what if you need that information programmatically: in a script, a CI pipeline, or as part of an automated workflow? The browser filter is not accessible through the API, and the /v1/models endpoint does not distinguish between free hosted models and everything else.

How I Created a Custom ChatGPT Trained on the CXL Specification Documents

If you’re working with Compute Express Link (CXL) and wish you had an AI assistant trained on all the different versions of the specification—1.0, 1.1, 2.0, 3.0, 3.1… you’re in luck.

Whether you’re a CXL device vendor, a firmware engineer, a Linux kernel developer, a memory subsystem architect, a hardware validation engineer, or even an application developer working on CXL tools and utilities, chances are you’ve had to reference the CXL spec at some point. And if you have, you already know: these documents are dense, extremely technical, and constantly evolving.

Read More

I Turned Myself Into an Action Figure

Part of being in tech, especially in emerging memory technology, is constantly switching between the serious and the surreal. One day you’re in kernel debug mode, the next you’re explaining complex system architectures on a whiteboard, and then suddenly you’re jumping on the latest craze such as making yourself into an action figure.

It’s fun. It’s human. And honestly? It’s a reminder not to take yourself too seriously. (Even if your job title suggests otherwise.)

Read More

An Introduction to Generative Prompt Engineering

Introduction

Over the past few years, there has been an explosion in the use and development of large language models (LLMs). An LLM is a language model consisting of a neural network with many parameters (commonly billions of weights), trained on large quantities of text. Some of the most popular large language models are: GPT-3 (Generative Pretrained Transformer 3), developed by OpenAI; BERT (Bidirectional Encoder Representations from Transformers), developed by Google; RoBERTa (Robustly Optimized BERT Approach), developed by Facebook AI; and T5 (Text-to-Text Transfer Transformer), developed by Google. Many others exist and continue to emerge. These language models are designed to understand and generate natural language text, allowing for a wide range of applications such as chatbots, content creation, language translation, and more.

Read More