Codex

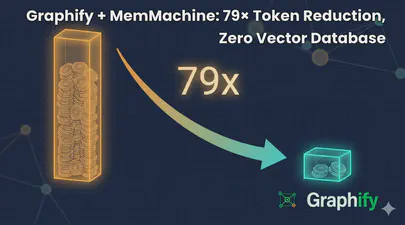

Graphify + MemMachine: 79× Token Reduction, Zero Vector Database

I help maintain MemMachine, an open-source long-term memory layer for AI agents. It’s a real codebase: 442 source files, 171 docs, a graph database, a SQL store, an MCP server, a REST API, a Python SDK, and integrations with eight different agent frameworks. When a new contributor asks “where does episodic memory actually get written?”, grep, the tool of choice for many AI coding assistants, doesn’t cut it. The answer threads through five files in three folders, plus a docker-compose service definition and a Helm chart. For every question, the assistant has to search all of these files, use the LLM to interpret both the question and the files, and then piece together an answer. That burns a lot of tokens and consumes much of the context window.


Self-Hosting Firecrawl on Ubuntu 25.04 with Docker Compose

Modern AI agents — Claude Code, Codex, OpenClaw, Hermes-Agent, and custom LangChain pipelines — need a way to read the web. Not raw HTML full of navigation debris, cookie banners, and JavaScript noise, but clean structured text that a language model can actually reason about. Firecrawl is the missing piece: an open-source web scraping and crawling API that fetches any URL and returns clean Markdown, ready to drop straight into a context window or a RAG pipeline.
